Web Scraping Financial Data

Web scraping for financial statements with Python — 1

Processing

Here is a simple trick: you can flexibly adjust the stock symbol and plug it into the URL. It will come in handy later if you want to extract hundreds of companies' financial statements.

    import urllib.request as ur       # "ur" is the alias used in the calls below
    from bs4 import BeautifulSoup
    import pandas as pd

    # Enter a stock symbol
    index = 'MSFT'

    # URL links
    url_is = '' + index + '/financials?p=' + index
    url_bs = '' + index + '/balance-sheet?p=' + index
    url_cf = '' + index + '/cash-flow?p=' + index

Now we have the URL links saved. If you open them manually in a web browser, you will see the page for each statement.

Next, we just need to open the link and read it into a proper format called lxml. Simple.

    read_data = ur.urlopen(url_is).read()
    soup_is = BeautifulSoup(read_data, 'lxml')

Well, if you open soup_is, it will look like a mess because the elements were originally in HTML format. All elements are systematically arranged in classes. But how do we know which classes the relevant data are stored in? After a few searches, we know that they are stored in “div” elements, so we can create an empty list and use a for loop to find all of them and append them to the list.

    ls = []  # Create empty list
    for l in soup_is.find_all('div'):  # Find every 'div' element
        ls.append(l.string)  # Add each element's string to the list, one by one
    ls = [e for e in ls if e not in ('Operating Expenses', 'Non-recurring Events')]  # Exclude those entries

You will find that there are a lot of “None” elements in ls because not every “div” has a string. We just need to filter those out.

    new_ls = list(filter(None, ls))

We then take a step further and start reading the list from index 12.

    new_ls = new_ls[12:]

Well, now we have a list. But how do we turn it into a data frame? First, we need to iterate 6 items at a time and store them in tuples. However, we want a list of those tuples so that the pandas library can read it into a data frame.

    is_data = list(zip(*[iter(new_ls)]*6))

Perfect, that is exactly what we want. Now we just have to read it into a data frame.

    Income_st = pd.DataFrame(is_data[0:])

Sweet. It is almost done. We just need to read the first row as the column names and the first column as the row index. Here are some cleanup steps.

    Income_st.columns = Income_st.iloc[0]  # Name columns after the first row of the dataframe
    Income_st = Income_st.iloc[1:, ]  # Start reading from the row at index 1
    Income_st = Income_st.T  # Transpose the dataframe
    Income_st.columns = Income_st.iloc[0]  # Name columns after the first row of the dataframe
    Income_st.drop(Income_st.index[0], inplace=True)  # Drop the first index row
    Income_st.index.name = ''  # Remove the index name
    Income_st.rename(index={'ttm': '12/31/2019'}, inplace=True)  # Rename ttm in the index to the end of the year
    Income_st = Income_st[Income_st.columns[:-5]]  # Remove the last 5 irrelevant columns

After using the same techniques for the income statement, balance sheet and cash flow statement, you should have three DataFrames, one per statement. After transposing the DataFrames, the dates become the row index and the features become the columns.

Here are some afterthought questions: How are the features correlated with the stock price of a company? How do you find out if they are correlated? If so, which time period of the stock price is related to the features from our financial statements? What else can you do with the extracted data when developing an algorithmic trading model?

Feel free to leave your answers and comments below to check if you can come up with some unique ideas. Thank you for reading this article; feel free to share it if you find it useful. A follow-up article uses the new DataFrame for further financial accounting analysis with Python.
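The flatten, chunk, and transpose steps above can be tried offline. The following is a minimal sketch, not the article's own code: it swaps BeautifulSoup and pandas for the standard library so it runs without extra packages, the HTML snippet and its numbers are invented for illustration, and rows are 3 cells wide instead of 6.

```python
from html.parser import HTMLParser

# Invented sample markup: every table cell is a <div>, as in the walkthrough.
SAMPLE_HTML = (
    "<div><div>Breakdown</div><div>ttm</div><div>6/30/2019</div></div>\n"
    "<div><div>Total Revenue</div><div>100</div><div>90</div></div>\n"
    "<div><div>Net Income</div><div>20</div><div>15</div></div>\n"
)


class DivTextCollector(HTMLParser):
    """Collect non-empty text found inside <div> elements, in document order."""

    def __init__(self):
        super().__init__()
        self.depth = 0   # number of <div> tags currently open
        self.cells = []  # flat list of cell strings

    def handle_starttag(self, tag, attrs):
        if tag == "div":
            self.depth += 1

    def handle_endtag(self, tag):
        if tag == "div" and self.depth > 0:
            self.depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if self.depth > 0 and text:  # skip whitespace-only nodes
            self.cells.append(text)


def chunk(flat, width):
    """Group a flat list into tuples of `width` items (the zip/iter idiom)."""
    return list(zip(*[iter(flat)] * width))


def transpose_table(rows):
    """Turn [header, feature rows...] into {period: {feature: value}}."""
    header, *body = rows
    table = {}
    for col, period in enumerate(header[1:], start=1):
        table[period] = {row[0]: row[col] for row in body}
    return table


parser = DivTextCollector()
parser.feed(SAMPLE_HTML)
rows = chunk(parser.cells, 3)
by_period = transpose_table(rows)
print(by_period["ttm"]["Total Revenue"])
```

One caveat of the `zip(*[iter(flat)] * width)` idiom: if the flat list length is not a multiple of `width`, the leftover items are silently dropped, so it is worth checking the length before chunking real scraped data.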

3 Ways to Scrape Financial Data WITHOUT Python | Octoparse

The financial market is a place of risk and instability. It’s hard to predict how the curve will move, and for investors a single decision can be a make-or-break move. That’s why experienced practitioners never lose track of financial data.

We human beings are wired to think in the short term. Unless we have a well-structured database, we cannot get a handle on voluminous information. Data scraping is a way to put complete data at your fingertips.

Table of Contents

What Are We Scraping When We Scrape Financial Data?

Why Scrape Financial Data?

How to Scrape Financial Data without Python

Let’s get started!

When it comes to scraping financial data, stock market data gets most of the attention. But there is more: trading prices and price changes of securities, mutual funds, futures, cryptocurrencies, etc. Financial statements, press releases and other business-related news are also sources of financial data that people scrape.

Financial data, when extracted and analyzed in real time, can provide a wealth of information for investing and trading. People in different positions scrape financial data for different purposes.

Stock market prediction

Stock trading organizations leverage data from online trading portals like Yahoo Finance to keep records of stock prices. This financial data helps companies predict market trends and buy or sell stocks for the highest profits. The same goes for trades in futures, currencies and other financial products. With complete data at hand, cross-comparison becomes easier and a bigger picture emerges.

Equity research

“Don’t put all your eggs in one basket.” Portfolio managers do equity research to predict the performance of multiple stocks. Data is used to identify patterns in their price changes and to develop an algorithmic trading model. Getting there involves a vast amount of financial data in quantitative analysis.

Sentiment analysis of financial market

Scraping financial data is not merely about numbers; it can be qualitative too. We may find that the presupposition raised by Adam Smith is untenable: people are not always economic, or rational. Behavioral economics reveals that our decisions are susceptible to all kinds of cognitive biases or, put plainly, emotions.

Using data from financial news, blogs, relevant social media posts and reviews, financial organizations can perform sentiment analysis to gauge people’s attitudes towards the market, which can be an indicator of the market trend.

If you are a non-coder, stay tuned: let me explain how you can scrape financial data with the help of Octoparse. Yahoo Finance is a good source of comprehensive, real-time financial data, and I will show you below how to scrape the site.

There are also many other financial data sources with up-to-date and valuable information you can scrape, such as Google Finance, Bloomberg, CNNMoney, Morningstar, TMXMoney, etc. All these sites are built from HTML, which means that their tables, news articles, and other text and URLs can be extracted in bulk by a web scraping tool.

To know more about what web scraping is and what it is used for, you can check out this article.

There are 3 ways to get the data:

Use a web scraping template

Build your web crawlers

Turn to data scraping services

To help newbies get an easy start on web scraping, Octoparse offers an array of web scraping templates. These templates are preformatted, ready-to-use crawlers. Users can pick one of them to pull data from the respective pages instantly.

The Yahoo Finance template offered by Octoparse is designed to scrape cryptocurrency data. No further configuration is required. Simply click “try it” and you will get the table data in minutes.

In addition to cryptocurrency data, you can also build a crawler from scratch in 2 steps to scrape world indices from Yahoo Finance. A customized crawler is highly flexible in terms of data extraction. This method also works for scraping other pages on Yahoo Finance.

Step 1: Enter the web address to build a crawler

The bot will load the website in the built-in browser, and one click on the Tips Panel triggers the auto-detection process and sets up the table data fields.

Step 2: Execute the crawler to get data

When all your desired data is highlighted in red, save the settings and run the crawler. As you can see in the pop-up, all the data has been scraped successfully. Now you can export the data to Excel, JSON, CSV, or to your database via APIs.

3. Financial data scraping services

If you scrape financial data only occasionally and in fairly small amounts, handy web scraping tools will serve you well. You may find joy in building your own crawlers. However, if you need voluminous data for in-depth analysis, say millions of records, and have a high standard of accuracy, it is better to hand your scraping needs to a group of reliable web scraping professionals.

Why are data scraping services worth it?

Time and energy-saving

The only thing you need to do is convey clearly to the data service provider what data you want. Once this is done, the data service team will deal with the rest, no hassle. You can focus on your core business and do what you are good at. Let professionals get the scraping job done for you.

Zero learning curve & tech issues

Even the easiest scraping tool takes time to master. The ever-changing environment of different websites may be hard to deal with. And when you are scraping on a large scale, you may encounter issues such as IP bans, low speed, duplicate data, etc. A data scraping service can free you from these troubles.

No legal violations

If you do not pay enough attention to the terms of service of the data sources you are scraping, you may get yourself into trouble. With the support of experienced legal counsel, a professional web scraping service provider works in accordance with the law, and the whole scraping process is carried out in a legitimate manner.

Author: Milly

Edited by Cici

Web Scraping 101: 10 Myths that Everyone Should Know | Octoparse

1. Web Scraping is illegal

Many people have false impressions about web scraping. This is because some people don’t respect the great work on the internet and steal the content. Web scraping isn’t illegal by itself, yet problems arise when people use it without the site owner’s permission and in disregard of the ToS (Terms of Service). According to the report, 2% of online revenues can be lost due to the misuse of content through web scraping. Even though no single clear law addresses web scraping, it is covered by a range of legal regulations. For example:

Violation of the Computer Fraud and Abuse Act (CFAA)

Violation of the Digital Millennium Copyright Act (DMCA)

Trespass to Chattel

Misappropriation

Copyright infringement

Breach of contract

2. Web scraping and web crawling are the same

Web scraping involves extracting specific data from a targeted webpage, for instance, data about sales leads, real estate listings and product pricing. In contrast, web crawling is what search engines do: it scans and indexes a whole website along with its internal links. A “crawler” navigates through the web pages without a specific goal.
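The distinction can be made concrete with a toy example. This is only a sketch: the `SITE` dictionary is a hypothetical in-memory stand-in for a website (no network access), and the page paths and the price value are made up.

```python
from collections import deque

# Hypothetical site: path -> (links found on the page, data fields on the page)
SITE = {
    "/": (["/listings", "/about"], {}),
    "/listings": (["/listings/1", "/"], {}),
    "/listings/1": ([], {"price": "250,000"}),
    "/about": (["/"], {}),
}


def crawl(start):
    """Crawler: follow internal links breadth-first and visit every reachable page."""
    seen = {start}
    queue = deque([start])
    visited = []
    while queue:
        page = queue.popleft()
        visited.append(page)
        links, _ = SITE[page]
        for link in links:
            if link not in seen:  # avoid revisiting pages and looping forever
                seen.add(link)
                queue.append(link)
    return visited


def scrape(page, field):
    """Scraper: go to one known page and extract one specific field."""
    _, fields = SITE[page]
    return fields.get(field)


print(crawl("/"))
print(scrape("/listings/1", "price"))
```

The crawler's output is a list of pages; the scraper's output is a single value from a page it already knew it wanted.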

3. You can scrape any website

People often ask to scrape things like email addresses, Facebook posts, or LinkedIn information. According to an article titled “Is web crawling legal?”, it is important to note these rules before conducting web scraping:

Private data that requires a username and passcode cannot be scraped.

Comply with the ToS (Terms of Service) when it explicitly prohibits web scraping.

Don’t copy data that is copyrighted.

One person can be prosecuted under several laws. For example, suppose someone scraped confidential information and sold it to a third party, disregarding the cease-and-desist letter sent by the site owner. This person can be prosecuted for Trespass to Chattel, Violation of the Digital Millennium Copyright Act (DMCA), Violation of the Computer Fraud and Abuse Act (CFAA) and Misappropriation.

This doesn’t mean that you can’t scrape social media channels like Twitter, Facebook, Instagram, and YouTube. They are friendly to scraping services that follow the provisions of the file. For Facebook, you need to get its written permission before conducting automated data collection.
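Assuming that “the file” above refers to a site's robots.txt (the text does not name it), a scraper can check those provisions before requesting a page. The sketch below uses Python's standard `urllib.robotparser`; the robots.txt content, the `example-bot` user agent, and the `example.com` URLs are all made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real one is served at <site>/robots.txt.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""

rules = RobotFileParser()
rules.parse(ROBOTS_TXT.splitlines())  # parse from text, so no network request is made


def allowed(path, agent="example-bot"):
    """Return True if robots.txt permits `agent` to fetch `path`."""
    return rules.can_fetch(agent, "https://example.com" + path)


print(allowed("/quotes/MSFT"))
print(allowed("/private/accounts"))
```

A robots.txt check only covers what the site asks of automated clients; it does not replace reading the Terms of Service or obtaining written permission where a site requires it.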

4. You need to know how to code

A web scraping tool (data extraction tool) is very useful for non-tech professionals like marketers, statisticians, financial consultants, bitcoin investors, researchers, journalists, etc. Octoparse launched a one-of-a-kind feature: web scraping templates, which are preformatted scrapers that cover over 14 categories on over 30 websites including Facebook, Twitter, Amazon, eBay, Instagram and more. All you have to do is enter the keywords/URLs as parameters, without any complex task configuration. Web scraping with Python is time-consuming. A web scraping template, on the other hand, is an efficient and convenient way to capture the data you need.

5. You can use scraped data for anything

It is perfectly legal to scrape data that websites publish for public consumption and use it for analysis. However, it is not legal to scrape confidential information for profit. For example, scraping private contact information without permission and selling it to a third party for profit is illegal. Besides, repackaging scraped content as your own without citing the source is not ethical either. Follow the principle of no spamming, no plagiarism, and no fraudulent use of data, all of which are prohibited by law.

6. A web scraper is versatile

Maybe you’ve come across websites that change their layout or structure once in a while. Don’t get frustrated when your scraper fails to read such a website the second time around. There are many possible reasons. It isn’t necessarily because the site has identified you as a suspicious bot; it may also be caused by a different geo-location or machine access. In these cases, it is normal for a web scraper to fail to parse the website until it has been adjusted.

7. You can scrape at a fast speed

You may have seen scraper ads boasting how speedy their crawlers are. It does sound good when they tell you they can collect data in seconds. However, you are the one who will be prosecuted if damage is caused. This is because a flood of data requests at high speed can overload a web server, which might lead to a server crash. In this case, the person is responsible for the damage under the law of “trespass to chattels” (Dryer and Stockton 2013). If you are not sure whether a website can be scraped, ask your web scraping service provider. Octoparse is a responsible web scraping service provider that puts clients’ satisfaction first. It is crucial for Octoparse to help its clients solve their problems and succeed.
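One common way to avoid overloading a server is to enforce a minimum gap between requests. The following is a minimal sketch of that idea; the `Throttle` class and the 0.05-second interval are illustrative choices, not something the article or any particular tool prescribes, and the loop does not actually fetch anything.

```python
import time


class Throttle:
    """Enforce a minimum gap between consecutive requests."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval  # seconds between requests
        self._clock = clock               # injectable so the class can be tested without waiting
        self._sleep = sleep
        self._last = None                 # time of the previous request, if any

    def wait(self):
        """Block until at least `min_interval` seconds have passed since the last call."""
        now = self._clock()
        if self._last is not None:
            remaining = self.min_interval - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last = now


throttle = Throttle(min_interval=0.05)
for page in ["/a", "/b", "/c"]:  # placeholder paths
    throttle.wait()
    # a real scraper would request `page` here
print("done")
```

An appropriate interval depends on the site; a published crawl delay or the site's terms, where available, are better guides than a fixed number.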

8. API and Web scraping are the same

An API is like a channel for sending your data request to a web server and getting the desired data back. An API typically returns the data in JSON format over the HTTP protocol; examples include the Facebook API, Twitter API, and Instagram API. However, that doesn’t mean you can get any data you ask for. Web scraping, by contrast, lets you visualize the process because you interact with the websites directly. Octoparse has web scraping templates, which make it even more convenient for non-tech professionals to extract data by filling in the parameters with keywords/URLs.
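To illustrate the difference, here is a small sketch that reads the same value from a JSON payload and from an HTML fragment. Both inputs are invented placeholders (no real API or page is queried), and the field names and markup are assumptions made for the example.

```python
import json
from html.parser import HTMLParser

# Hypothetical API response body: structured data, ready to index by key.
API_BODY = '{"symbol": "MSFT", "price": 100.0, "currency": "USD"}'

# Hypothetical page fragment showing the same value inside presentation markup.
PAGE_HTML = (
    '<div class="quote"><span class="symbol">MSFT</span>'
    '<span class="price">100.00</span></div>'
)


def price_from_api(body):
    """API route: decode the JSON and read the field directly."""
    return json.loads(body)["price"]


class PriceFinder(HTMLParser):
    """Scraping route: locate the element that holds the price and parse its text."""

    def __init__(self):
        super().__init__()
        self._in_price = False
        self.price = None

    def handle_starttag(self, tag, attrs):
        if tag == "span" and ("class", "price") in attrs:
            self._in_price = True

    def handle_endtag(self, tag):
        if tag == "span":
            self._in_price = False

    def handle_data(self, data):
        if self._in_price:
            self.price = float(data)


def price_from_html(html):
    finder = PriceFinder()
    finder.feed(html)
    return finder.price


print(price_from_api(API_BODY), price_from_html(PAGE_HTML))
```

The API route depends on documented field names, while the scraping route depends on the page's markup, which is why a layout change can break a scraper without affecting an API client.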

9. The scraped data only works for our business after being cleaned and analyzed

Many data integration platforms can help visualize and analyze data. In comparison, data scraping may seem to have no direct impact on business decision-making. Web scraping does extract raw data from webpages, which needs to be processed to yield insights such as sentiment analysis. However, some raw data can be extremely valuable in the hands of gold miners.

With the Octoparse Google Search web scraping template, you can extract information from organic search results, including the titles and meta descriptions of your competitors, to determine your SEO strategies. In retail, web scraping can be used to monitor product pricing and distribution. For example, Amazon may crawl Flipkart and Walmart under the “Electronic” catalog to assess the performance of electronic items.

10. Web scraping can only be used in business

Web scraping is widely used in fields beyond business uses such as lead generation, price monitoring, price tracking, and market analysis. Students can leverage a Google Scholar web scraping template to conduct paper research. Realtors can conduct housing research and predict the housing market. You can find YouTube influencers or Twitter evangelists to promote your brand, or build your own news aggregator that covers only the topics you want by scraping news media and RSS feeds.

Source:

Dryer, A. J., and Stockton, J. 2013. “Internet ‘Data Scraping’: A Primer for Counseling Clients,” New York Law Journal. Retrieved from

Frequently Asked Questions about web scraping financial data

Where can I scrape financial data?

There are lots of financial data sources with up-to-date and valuable information you can scrape from, such as Google Finance, Bloomberg, CNNMoney, Morningstar, TMXMoney, etc. (Jan 25, 2021)

Is web scraping data legal?

It is perfectly legal to scrape data that websites publish for public consumption and use it for analysis. However, it is not legal to scrape confidential information for profit. For example, scraping private contact information without permission and selling it to a third party for profit is illegal. (Aug 16, 2021)

How do you scrape financial data in Python?

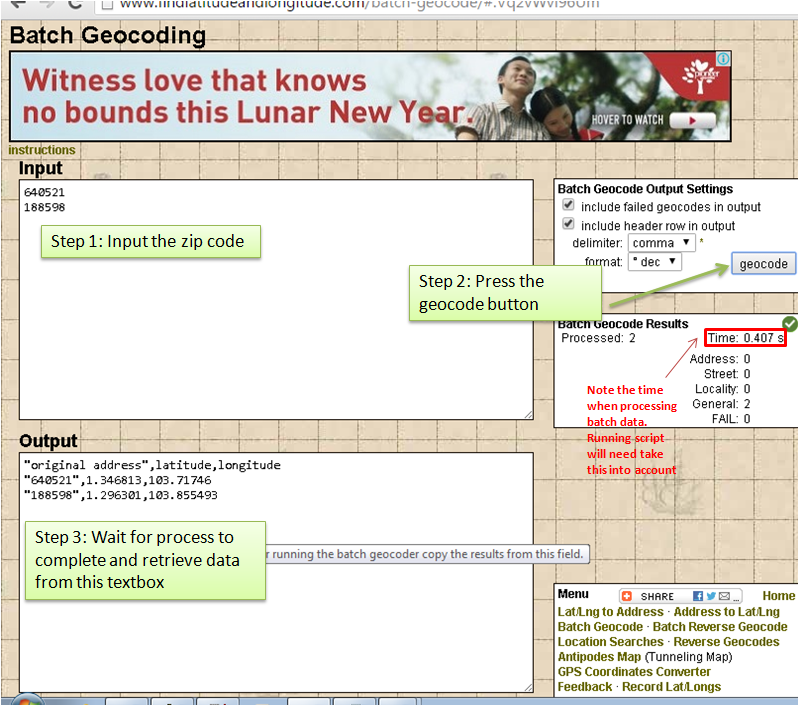

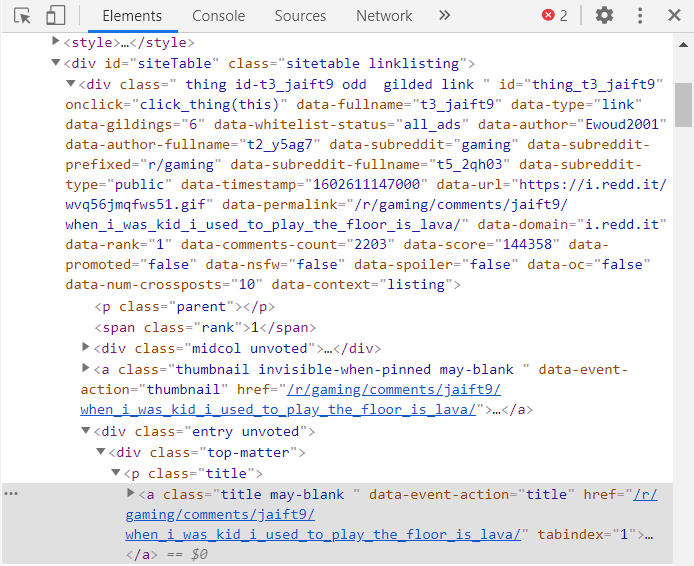

How to scrape Yahoo Finance and extract fundamental stock market data using Python, LXML, and Pandas: Disclaimers. … Prerequisites. … Find the ticker symbol. … Take a look at the Balance Sheet data that we’re going to scrape. … Inspect the page source. … Scrape some balance sheet data. … Reading the financial data. … Clean up the data. (Jan 24, 2019)