Web Scraper Tool Chrome Web Store

The Best Web Scraper Chrome Extensions

Are you looking for the best web scrapers available as Chrome extensions? Then come in and check out our list of tested and trusted Chrome-based web scrapers. The list contains both paid and free options.

The importance of web scraping cannot be overemphasized: within a few hours, you can convert a whole website with hundreds of thousands of pages into the structured data you need for your business or research through automation. Web scrapers are the tools that make web scraping possible, and there are many on the market. Some are paid while others are free. In terms of platform support, Chrome is one of the platforms that gets the most attention from developers of web scrapers, and a good number of web scrapers have been developed for it.

Chrome is the most popular web browser on the market right now, and the Chrome Web Store hosts over 180,000 extensions, web scrapers among them. While a good number of the web scrapers in the Chrome Web Store are free, that does not mean all of them are worth using for a serious web scraping project. That is why this article was written: to recommend the best web scrapers available in the Chrome Web Store.

Why Use Web Scrapers Available as Chrome Extensions?

There was a time when developers did not see Chrome extensions as software to be taken seriously. That time is long gone, as more and more Chrome users find extensions helpful. Now, full-blown software is available as Chrome extensions, and web scrapers are among them. But why should you use them? They are lightweight and easy to develop, so they are usually cheap, and some are even free. This makes them cost-effective compared to scrapers developed as cloud-based platforms or installable apps on PCs.
They are also cross-platform, since they work in a browser environment.

The 5 Best Web Scraper Chrome Extensions

Make no mistake about it: web scraping is easy, fast, and stress-free only if you use the best web scrapers for your projects. Unfortunately, we have come to realize that a good number of web scrapers on the market live on hype, so it is important that we clear the air to keep you from choosing the wrong tool for the job. Below are the 5 best web scrapers available as Chrome extensions that we have tried and found to work quite well.

Pricing: Free
Free Trials: Chrome version is completely free

The first tool comes from a web scraping tool provider with a Chrome browser extension and a Firefox add-on. The Chrome extension is one of the best web scrapers you can install. With over 300,000 downloads and impressive customer reviews in the store, this extension is a must-have for web scrapers. With this tool, you can extract data from any website of your choice easily and swiftly. It requires no coding skills and instead presents a point-and-click interface for training the tool on the data to be extracted. Its only dependency is an installed Chrome browser.

Data Scraper

Pricing: Starts at $19.99 per month
Free Trials: 500 pages per month
Data Output Format: CSV, Excel

The Data Miner Chrome extension remains free provided you scrape no more than 500 pages in a month; for anything more than that, you will have to opt in to a paid plan. The Data Miner extension requires no coding to use, and it is well suited to absolute beginners, as it requires just clicks to scrape. Currently, this extension is available for 15,000+ websites. It is important to know that Data Miner behaves like a regular user rather than a bot, so you do not have to worry about blocks.
Data Miner automates form filling, scrapes tables with just a click, and automatically goes from page to page on paginated sites.

Scraper

Pricing: Completely free
Free Trials: Free
Data Output Format: CSV, Excel, TXT

Scraper is fairly unpopular compared with the two web scrapers discussed above; it does not even have a website of its own. However, it works quite well and can extract data from web pages and convert it into spreadsheets. This web scraper is free to use and quite simple, but it comes with some limitations. The major problem with Scraper is that it is not beginner-friendly: using it requires being comfortable with XPath, so it is best suited to intermediate and advanced users.

Pricing: Starts at $49 per month
Free Trials: 50 requests monthly
Data Output Format: TXT, CSV

The fourth tool is a web scraping tool available as a Chrome extension. Unlike the others described above, it is specialized and tailored towards crawling web pages in search of email addresses. With it, you can find the email address of any professional or even scrape all the email addresses associated with a specific domain name. It also has an email verifier that you can use to check the deliverability of any email address. Interestingly, over 2 million professionals are making use of this tool.

Agenty Scraping Agent

Pricing: Free
Free Trials: 14 days free trial – 100 pages credit
Data Output Format: Google spreadsheet, CSV, Excel

The Agenty Scraping Agent is not a free tool and requires a monetary commitment, but it has a free trial option for testing. It can be installed as a Chrome extension. It presents a point-and-click interface for training the agent on the data required. It facilitates anonymous web scraping through the use of highly anonymous proxies and automatic IP rotation.
It supports batch URL crawling and even crawls websites that require a login as well as JavaScript-heavy websites. It keeps a history of your crawling activities and can be integrated with a good number of tools, including Google Spreadsheet, Amazon S3, and more.

Conclusion

Looking at the list above, you can see that developers are already taking Chrome seriously as a platform, and we expect more web scrapers to join this list. Web scrapers available as Chrome extensions are light and easy to use, and they come with free plans that are perfect for small web scraping projects. They are also cross-platform and work in a browser environment, which makes them perfect for web scraping.

How to scrape data with Chrome extensions | by WrekinData | Medium

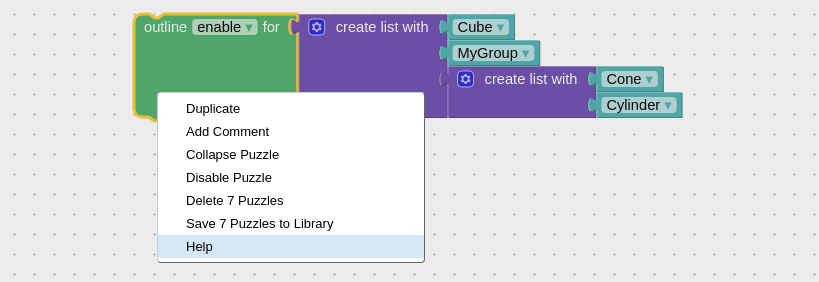

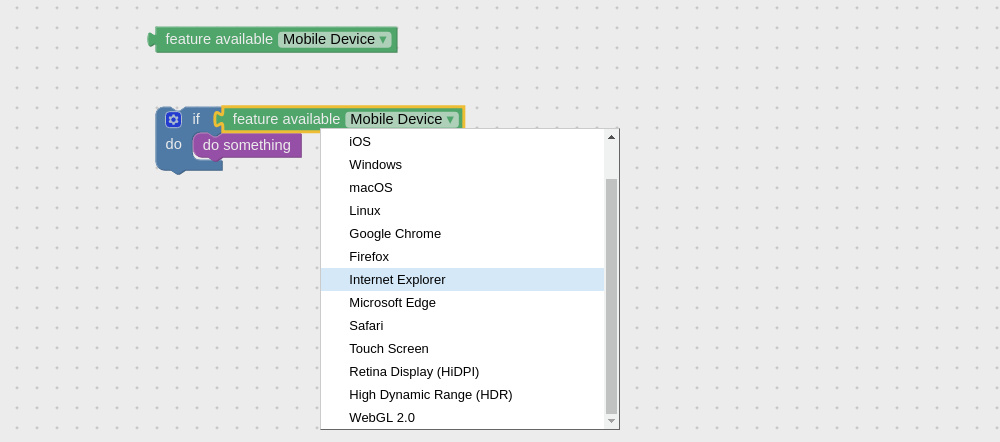

When it comes to data scraping, there are plenty of solutions that can give you the kind of results you are looking for. One that is quite often overlooked, however, is simply leveraging your browser. Here, we will take a look at how you can utilise Chrome’s extension functionalities to scrape data of any kind.

Why would you want to do this?

The main reason why someone would opt for a Chrome extension for web scraping is a limited budget. Of course, you may have a multitude of other reasons too. It’s a great option for those who:

● Are inexperienced in data scraping and would like to get a taste before investing further.
● Would like a simple, no-frills scraping tool without having to spend time and effort on software that can be very technical.
● Have no need for fully-fledged data scraping, as their needs can be met with something much simpler instead.
● Need to figure out whether or not data scraping fits their own business model and how they can integrate it.

For these and many other reasons, utilising an extension can be an excellent introduction to data scraping and may even serve as a complete package for some.

Start by doing your research

As is usually the case with DIY tech solutions, there is no single option that can fulfill your every requirement. As you might expect, there are plenty of data scraping extensions that vary in functionality, usability, and results.

One of the things that’s common in many of these extensions is that they assume a basic working knowledge of HTML and CSS. This is because they mainly use HTML and CSS selectors to understand and scrape the data that you want, and they may need you to customise their selections to suit your needs.

Some examples of good scraping tools (in no particular order):

● Agenty
● Web Scraper
● Data Scraper

How do these data scrapers work?
While this will often depend on the exact extension you choose, they all share common features, as they utilise the same kind of web-based features to extract data.

To begin with, you will need to install the extension from the Chrome Web Store. After that, you should see a new icon in the top-right hand corner of Chrome. Click on that button and you will see the extension’s working interface. Some extensions, like Web Scraper, integrate directly into Chrome’s Developer Tools, which can be easily opened by pressing Ctrl + Shift + I or just by hitting the F12 key.

Once you can see the user interface, you are almost ready to go. Like all other similar software, Chrome-based data scrapers operate on a click-to-scrape basis so that you can quickly gather the required data.

The most common output file is CSV, which you can then open with any compatible software such as Microsoft Excel or Google’s own Sheets, so you never have to leave your browser.

Data scraping has never been easier

After trying your first data scraping extension, you will quickly come to realise that you don’t always need complicated tools to extract data from a website. In a time where knowing your competition and understanding big data has never been more important, we are lucky that these processes have never been easier either.
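To make the "scrape a table, export a CSV" workflow concrete, here is a minimal Python sketch of what such an extension does behind its point-and-click interface. It is not the code of any extension named in this article: the sample markup, the `TableExtractor` class and the `table_to_csv` function are all hypothetical, and it matches plain `<tr>`/`<td>` tags with the standard library instead of using full CSS selectors.

```python
import csv
import io
from html.parser import HTMLParser


class TableExtractor(HTMLParser):
    """Collect the text of every <td>/<th> cell, grouped by <tr> row."""

    def __init__(self):
        super().__init__()
        self.rows = []      # finished rows, each a list of cell strings
        self._row = None    # row currently being built, or None
        self._cell = None   # text fragments of the current cell, or None

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        # Only keep text that appears inside a table cell.
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            self.rows.append(self._row)
            self._row = None


def table_to_csv(html: str) -> str:
    """Return the table cells found in `html` as CSV text."""
    parser = TableExtractor()
    parser.feed(html)
    parser.close()
    out = io.StringIO()
    csv.writer(out, lineterminator="\n").writerows(parser.rows)
    return out.getvalue()


# Hypothetical page fragment, used only for illustration.
SAMPLE_HTML = """
<table>
  <tr><th>Product</th><th>Price</th></tr>
  <tr><td>Widget</td><td>9.99</td></tr>
  <tr><td>Gadget, large</td><td>24.50</td></tr>
</table>
"""

if __name__ == "__main__":
    print(table_to_csv(SAMPLE_HTML))
```

The resulting text can be saved with a `.csv` extension and opened in Excel or Google Sheets. Cells that contain commas are quoted by the `csv` module, so they stay in one column.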

Search engine scraping – Wikipedia

Search engine scraping is the process of harvesting URLs, descriptions, or other information from search engines such as Google, Bing, Yahoo, Petal or Sogou. This is a specific form of screen scraping or web scraping dedicated to search engines only.

Most commonly, larger search engine optimization (SEO) providers depend on regularly scraping keywords from search engines, especially Google, Petal and Sogou, to monitor the competitive position of their customers’ websites for relevant keywords or their indexing status.

Search engines like Google have implemented various forms of human detection to block any sort of automated access to their service, [1] with the intent of driving the users of scrapers towards buying their official APIs instead.

The process of entering a website and extracting data in an automated fashion is also often called “crawling”. Search engines like Google, Bing, Yahoo, Petal or Sogou get almost all their data from automated crawling bots.

Difficulties

Google is by far the largest search engine, with the most users as well as the most advertising revenue, which makes it the most important search engine to scrape for SEO-related companies. [2]

Although Google does not take legal action against scraping, it uses a range of defensive methods that make scraping its results a challenging task, even when the scraping tool is realistically spoofing a normal web browser:

Google uses a complex system of request rate limiting that can vary by language, country and User-Agent, as well as by the keywords or search parameters. Rate limiting makes automated access to a search engine unpredictable, because the limiting behaviour is not known to the outside developer or user.

Network and IP limitations are also part of the scraping defense systems. Search engines cannot easily be tricked by switching to another IP, yet using proxies is a very important part of successful scraping. The diversity and abuse history of an IP matter as well.

Offending IPs and offending IP networks can easily be stored in a blacklist database to detect offenders much faster. The fact that most ISPs give dynamic IP addresses to customers requires that such automated bans be only temporary, to not block innocent users.

Behaviour-based detection is the most difficult defense system to overcome. Search engines serve their pages to millions of users every day, which provides a large amount of behaviour information. A scraping script or bot does not behave like a real user: aside from non-typical access times, delays and session times, the keywords being harvested might be related to each other or include unusual parameters. Google, for example, has a very sophisticated behaviour analysis system, possibly using deep learning software to detect unusual patterns of access. It can detect unusual activity much faster than other search engines. [3]

HTML markup changes: depending on the methods used to harvest the content of a website, even a small change in the HTML can break a scraping tool until it is updated.

General changes in detection systems: in recent years search engines have tightened their detection systems nearly month by month, making it more and more difficult to scrape reliably, as developers need to experiment and adapt their code regularly. [4]
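The temporary blacklist mentioned above (bans that expire so that a later, innocent holder of a dynamic IP is not blocked) can be illustrated with a small Python sketch. This is an assumption about how such a store could work, not a description of any search engine's actual system; the `TemporaryBlacklist` class, its method names and the 60-second ban are hypothetical.

```python
import time


class TemporaryBlacklist:
    """Remember offending IPs for a limited time, then forget them."""

    def __init__(self, ban_seconds: float, clock=time.monotonic):
        self.ban_seconds = ban_seconds
        self._clock = clock      # injectable so the expiry can be tested
        self._expires = {}       # ip -> time at which the ban ends

    def ban(self, ip: str) -> None:
        """Start (or restart) a ban for this IP."""
        self._expires[ip] = self._clock() + self.ban_seconds

    def is_banned(self, ip: str) -> bool:
        """Return True while the ban is active; drop expired entries."""
        expiry = self._expires.get(ip)
        if expiry is None:
            return False
        if self._clock() >= expiry:
            del self._expires[ip]
            return False
        return True


if __name__ == "__main__":
    fake_now = [0.0]
    blacklist = TemporaryBlacklist(ban_seconds=60, clock=lambda: fake_now[0])
    blacklist.ban("203.0.113.7")              # documentation-range address
    print(blacklist.is_banned("203.0.113.7"))
    fake_now[0] = 61.0                        # one minute later the ban has lapsed
    print(blacklist.is_banned("203.0.113.7"))
```

Keeping only an expiry time per address makes the lookup a single dictionary access, which fits the point above that a blacklist lets offenders be detected much faster than behaviour analysis.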

Detection

When a search engine’s defenses suspect that access is automated, the search engine can react in several ways.

The first layer of defense is a captcha page[5] where the user is prompted to verify they are a real person and not a bot or tool. Solving the captcha will create a cookie that permits access to the search engine again for a while. After about one day the captcha page is removed again.

The second layer of defense is a similar error page but without a captcha. In such a case the user is completely blocked from using the search engine until the temporary block is lifted or the user changes their IP.

The third layer of defense is a long-term block of the entire network segment. Google has blocked large network blocks for months. This sort of block is likely triggered by an administrator and only happens if a scraping tool is sending a very high number of requests.

All these forms of detection may also happen to a normal user, especially users sharing the same IP address or network class (IPv4 ranges as well as IPv6 ranges).
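From the scraper's side, the first two layers described above show up as different kinds of responses, and a tool has to tell them apart before deciding how to react. The Python sketch below is a crude illustration under stated assumptions: the marker strings and the status codes 403, 429 and 503 are hypothetical choices, not documented behaviour of any search engine, and `classify_response` is a made-up helper. A long-term network block cannot be recognised from a single response and is not modelled here.

```python
from enum import Enum


class Verdict(Enum):
    OK = "ok"
    CAPTCHA = "captcha"    # first layer: a captcha page is served
    BLOCKED = "blocked"    # second layer: an error page without a captcha


# Hypothetical marker strings; real pages differ and change over time.
CAPTCHA_MARKERS = ("captcha",)
BLOCK_MARKERS = ("unusual traffic", "automated queries")

# Assumed status codes that commonly signal refusal or throttling.
BLOCK_STATUS_CODES = (403, 429, 503)


def classify_response(status_code: int, body: str) -> Verdict:
    """Guess which defense layer, if any, produced this response."""
    text = body.lower()
    if any(marker in text for marker in CAPTCHA_MARKERS):
        return Verdict.CAPTCHA
    if status_code in BLOCK_STATUS_CODES or any(m in text for m in BLOCK_MARKERS):
        return Verdict.BLOCKED
    return Verdict.OK


if __name__ == "__main__":
    print(classify_response(200, "<html>Please solve this CAPTCHA</html>"))
    print(classify_response(429, ""))
    print(classify_response(200, "<html>ten organic results</html>"))
```

Substring matching like this gives false positives, for example on a genuine results page for the query "captcha", so a real tool would need more specific checks.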

Methods of scraping Google, Bing, Yahoo, Petal or Sogou

To scrape a search engine successfully the two major factors are time and amount.

The more keywords a user needs to scrape and the less time available for the job, the more difficult scraping will be and the more developed a scraping script or tool needs to be.
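The trade-off between time and amount can be made concrete with a little arithmetic. The Python sketch below estimates how many IP addresses a job would need; the function name `proxies_needed` and the example workload of 10,000 keywords in 24 hours are hypothetical, and the sustainable per-IP hourly rate is an input you have to supply yourself.

```python
import math


def proxies_needed(keywords: int, pages_per_keyword: int,
                   hours_available: float,
                   requests_per_hour_per_ip: float) -> int:
    """Estimate the number of IPs required to finish a job in time."""
    if hours_available <= 0 or requests_per_hour_per_ip <= 0:
        raise ValueError("time and rate must be positive")
    total_requests = keywords * pages_per_keyword
    # Requests one IP can send within the time window at the given rate.
    capacity_per_ip = hours_available * requests_per_hour_per_ip
    return math.ceil(total_requests / capacity_per_ip)


if __name__ == "__main__":
    # 10,000 keywords, one result page each, to be finished within 24 hours.
    print(proxies_needed(10_000, 1, 24, 5))     # conservative per-IP rate
    print(proxies_needed(10_000, 1, 24, 100))   # optimistic per-IP rate
```

With these inputs the estimate is 84 IPs at 5 requests per hour and 5 IPs at 100 requests per hour, which shows how strongly the achievable per-IP rate drives the size of the setup.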

Scraping scripts need to overcome a few technical challenges:[6]

IP rotation using Proxies (proxies should be unshared and not listed in blacklists)

Proper time management: the time between keyword changes and pagination, as well as correctly placed delays. Effective long-term scraping rates can vary from only 3–5 requests (keywords or pages) per hour up to 100 and more per hour for each IP address or proxy in use. The quality of IPs, methods of scraping, keywords requested and language/country requested can greatly affect the possible maximum rate.

Correct handling of URL parameters, cookies as well as HTTP headers to emulate a user with a typical browser[7]

HTML DOM parsing (extracting URLs, descriptions, ranking position, sitelinks and other relevant data from the HTML code)

Error handling, automated reaction on captcha or block pages and other unusual responses[8]

Handling of captchas, as described above [9]
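The IP rotation and time management items in the list above can be sketched together in Python. This is a planning helper only and sends no requests; `jittered_delay`, `plan_requests`, the 30% jitter and the proxy names are hypothetical, and round-robin is just one simple rotation strategy among many.

```python
import itertools
import random


def jittered_delay(requests_per_hour: float, jitter: float = 0.3,
                   rng=random) -> float:
    """Seconds one IP should wait before its next request.

    The base delay spreads requests evenly over an hour; the jitter
    fraction randomises it so requests are not sent at fixed intervals.
    """
    base = 3600.0 / requests_per_hour
    return base * rng.uniform(1.0 - jitter, 1.0 + jitter)


def plan_requests(keywords, proxies, requests_per_hour, rng=random):
    """Yield (keyword, proxy, delay_seconds) with round-robin rotation.

    delay_seconds is the pause to leave before that proxy is used again.
    """
    proxies = list(proxies)
    if not proxies:
        raise ValueError("at least one proxy is required")
    rotation = itertools.cycle(proxies)
    for keyword in keywords:
        yield keyword, next(rotation), jittered_delay(requests_per_hour, rng=rng)


if __name__ == "__main__":
    # Hypothetical keywords and proxy labels, for illustration only.
    plan = plan_requests(["red shoes", "blue shoes", "green shoes"],
                         ["proxy-a", "proxy-b"], requests_per_hour=10)
    for keyword, proxy, delay in plan:
        print(f"{keyword!r} via {proxy}, then rest that proxy for {delay:.0f}s")
```

Passing a seeded `random.Random` instance as `rng` makes the plan reproducible, which helps when comparing how different rates behave.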

An example of open source scraping software that makes use of the above-mentioned techniques is GoogleScraper. [7] This framework controls browsers over the DevTools Protocol and makes it hard for Google to detect that the browser is automated.

Programming languages

When developing a scraper for a search engine, almost any programming language can be used, although some languages will be preferable depending on performance requirements.

PHP is a commonly used language for writing scraping scripts for websites or backend services, since it has powerful capabilities built in (DOM parsers, libcURL); however, its memory usage is typically 10 times that of similar C/C++ code. Ruby on Rails and Python are also frequently used to automate scraping jobs. For the highest performance, C++ DOM parsers should be considered.

Additionally, bash scripting can be used together with cURL as a command line tool to scrape a search engine.
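As an illustration of the HTML DOM parsing step in Python, the sketch below extracts the ranking position, URL and title of each result from a page using only the standard library. The markup is invented for the example: the `result` class on the links and the sample HTML are assumptions, and real search result pages use different, frequently changing markup.

```python
from html.parser import HTMLParser


class ResultLinkParser(HTMLParser):
    """Collect links marked with the (hypothetical) CSS class 'result'."""

    def __init__(self):
        super().__init__()
        self.results = []    # dicts with position, url and title
        self._href = None    # href of the result link being read, if any
        self._text = []      # text fragments inside that link

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        classes = (attrs.get("class") or "").split()
        if tag == "a" and "result" in classes and attrs.get("href"):
            self._href = attrs["href"]
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.results.append({
                "position": len(self.results) + 1,
                "url": self._href,
                "title": "".join(self._text).strip(),
            })
            self._href = None


# Invented markup, used only to exercise the parser.
SAMPLE_PAGE = """
<div><a class="result" href="https://example.com/a">First <b>hit</b></a></div>
<div><a class="nav" href="/next">Next</a></div>
<div><a class="result organic" href="https://example.org/b">Second hit</a></div>
"""

if __name__ == "__main__":
    parser = ResultLinkParser()
    parser.feed(SAMPLE_PAGE)
    for row in parser.results:
        print(row["position"], row["url"], row["title"])
```

Because the parser depends on one class name, a small markup change on the page would silently return no results, which is exactly the fragility described under Difficulties.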

Tools and scripts

When developing a search engine scraper there are several existing tools and libraries available that can either be used, extended or just analyzed to learn from.

iMacros – A free browser automation toolkit that can be used for very small volume scraping from within a user’s browser. [10]

cURL – a command line browser for automation and testing as well as a powerful open source HTTP interaction library available for a large range of programming languages. [11]

google-search – A Go package to scrape Google. [12]

SEO Tools Kit – Free online tools for search engines (such as Duckduckgo, Baidu, Petal, Sogou) that work through proxies (socks4/5, proxy). The tool includes asynchronous networking support and is able to control real browsers to mitigate detection. [13]

se-scraper – Successor of SEO Tools Kit. Scrape search engines concurrently with different proxies. [14]

Legal

When scraping websites and services, the legal aspect is often a big concern for companies. For web scraping, it depends greatly on the country the scraping user or company is from, as well as on which data or website is being scraped, with many different court rulings all over the world. [15][16][17]

However, when it comes to scraping search engines the situation is different: search engines usually do not list intellectual property, as they just repeat or summarize information they scraped from other websites.

The largest publicly known incident of a search engine being scraped happened in 2011, when Microsoft was caught scraping unknown keywords from Google for its own, rather new Bing service, [18] but even this incident did not result in a court case.

One possible reason might be that search engines like Google, Petal and Sogou get almost all their data by scraping millions of publicly reachable websites, also without reading and accepting those websites’ terms.

See also

Comparison of HTML parsers

References

[1] “Automated queries – Search Console Help”. Retrieved 2017-04-02.

[2] “Google Still World’s Most Popular Search Engine By Far, But Share Of Unique Searchers Dips Slightly”. 11 February 2013.

[3] “Does Google know that I am using Tor Browser?”.

[4] “Google Groups”.

[5] “My computer is sending automated queries – reCAPTCHA Help”. Retrieved 2017-04-02.

[6] “Scraping Google Ranks for Fun and Profit”.

[7] “Python3 framework GoogleScraper”. scrapeulous.

[8] Deniel Iblika (3 January 2018). “De Online Marketing Diensten van DoubleSmart”. DoubleSmart (in Dutch). Diensten. Retrieved 16 January 2019.

[9] Jan Janssen (26 September 2019). “Online Marketing Services van SEO SNEL”. SEO SNEL (in Dutch). Services. Retrieved 26 September 2019.

[10] “iMacros to extract google results”. Retrieved 2017-04-04.

[11] “libcurl – the multiprotocol file transfer library”.

[12] “A Go package to scrape Google” – via GitHub.

[13] “Free online SEO Tools (like Google, Yandex, Bing, Duckduckgo,…). Including asynchronous networking support.: NikolaiT/SEO Tools Kit”. 15 January 2019 – via GitHub.

[14] Tschacher, Nikolai (2020-11-17), NikolaiT/se-scraper, retrieved 2020-11-19.

[15] “Is Web Scraping Legal?”. Icreon (blog).

[16] “Appeals court reverses hacker/troll “weev” conviction and sentence [Updated]”.

[17] “Can Scraping Non-Infringing Content Become Copyright Infringement… Because Of How Scrapers Work?”.

[18] Singel, Ryan. “Google Catches Bing Copying; Microsoft Says ‘So What?’”. Wired.

External links

Scrapy – Open source Python framework, not dedicated to search engine scraping but regularly used as a base and with a large number of users.

Compunect scraping source code – A range of well-known open source PHP scraping scripts, including a regularly maintained Google Search scraper for scraping advertisements and organic result pages.

Justone free scraping scripts – Information about Google scraping as well as open source PHP scripts (last updated mid 2016)

rvices source code – Python and PHP open source classes for a 3rd party scraping API. (updated January 2017, free for private use)

PHP Simpledom – A widespread open source PHP DOM parser to interpret HTML code into variables.

SerpApi – Third-party service based in the United States allowing you to scrape search engines legally.

Frequently Asked Questions about web scraper tool chrome web store

How do you scrape a website in Chrome?

1. Install the extension and open the Web Scraper tab in developer tools (which has to be placed at the bottom of the screen).
2. Create a new sitemap.
3. Add data extraction selectors to the sitemap.
4. Launch the scraper and export the scraped data.

How do I scrape data in Chrome extensions?

Some extensions, like Web Scraper, integrate directly in Chrome’s Developer Tools which can be easily found by pressing Ctrl + Shift + I or just by hitting the F12 button. Once you can see the user interface, you are almost ready to go.

How can I scrape my website fast?

Web Socket: The Fastest Way To Scrape Websites

1. Check whether the website provides a RESTful API. If so, just use the RESTful API; if not, continue to the next step.
2. Inspect the HTML elements that you want to scrape.
3. Try a simple request to get the elements.
4. Success, hell yeah!
5. If not, try another CSS selector, XPath expression, and so on.