Parse HTML with Python

html.parser — Simple HTML and XHTML parser — Python …

Source code: Lib/html/

This module defines a class HTMLParser which serves as the basis for

parsing text files formatted in HTML (HyperText Mark-up Language) and XHTML.

class html.parser.HTMLParser(*, convert_charrefs=True)

Create a parser instance able to parse invalid markup.

If convert_charrefs is True (the default), all character

references (except the ones in script/style elements) are

automatically converted to the corresponding Unicode characters.

An HTMLParser instance is fed HTML data and calls handler methods

when start tags, end tags, text, comments, and other markup elements are

encountered. The user should subclass HTMLParser and override its

methods to implement the desired behavior.

This parser does not check that end tags match start tags or call the end-tag

handler for elements which are closed implicitly by closing an outer element.

Changed in version 3.4: convert_charrefs keyword argument added.

Changed in version 3.5: The default value for argument convert_charrefs is now True.
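As a minimal sketch of the convert_charrefs behaviour described above, the following compares the two settings on the same input. The RefCollector class and the collect() helper are names made up for this illustration; only HTMLParser and its handler methods come from the standard library.

```python
from html.parser import HTMLParser


class RefCollector(HTMLParser):
    """Record which handler receives each piece of text or reference."""

    def __init__(self, *, convert_charrefs=True):
        super().__init__(convert_charrefs=convert_charrefs)
        self.events = []

    def handle_data(self, data):
        self.events.append(("data", data))

    def handle_entityref(self, name):
        # Called for named references such as &gt; when convert_charrefs is False
        self.events.append(("entityref", name))

    def handle_charref(self, name):
        # Called for numeric references such as &#62; when convert_charrefs is False
        self.events.append(("charref", name))


def collect(markup, convert_charrefs):
    """Hypothetical helper: parse markup and return the recorded events."""
    parser = RefCollector(convert_charrefs=convert_charrefs)
    parser.feed(markup)
    parser.close()
    return parser.events


converted = collect("<p>a &gt; b &#62; c</p>", True)
raw = collect("<p>a &gt; b &#62; c</p>", False)
print(converted)
print(raw)
```

With the default setting the references arrive already decoded inside the text; with convert_charrefs=False they are reported separately and decoding is left to the subclass.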

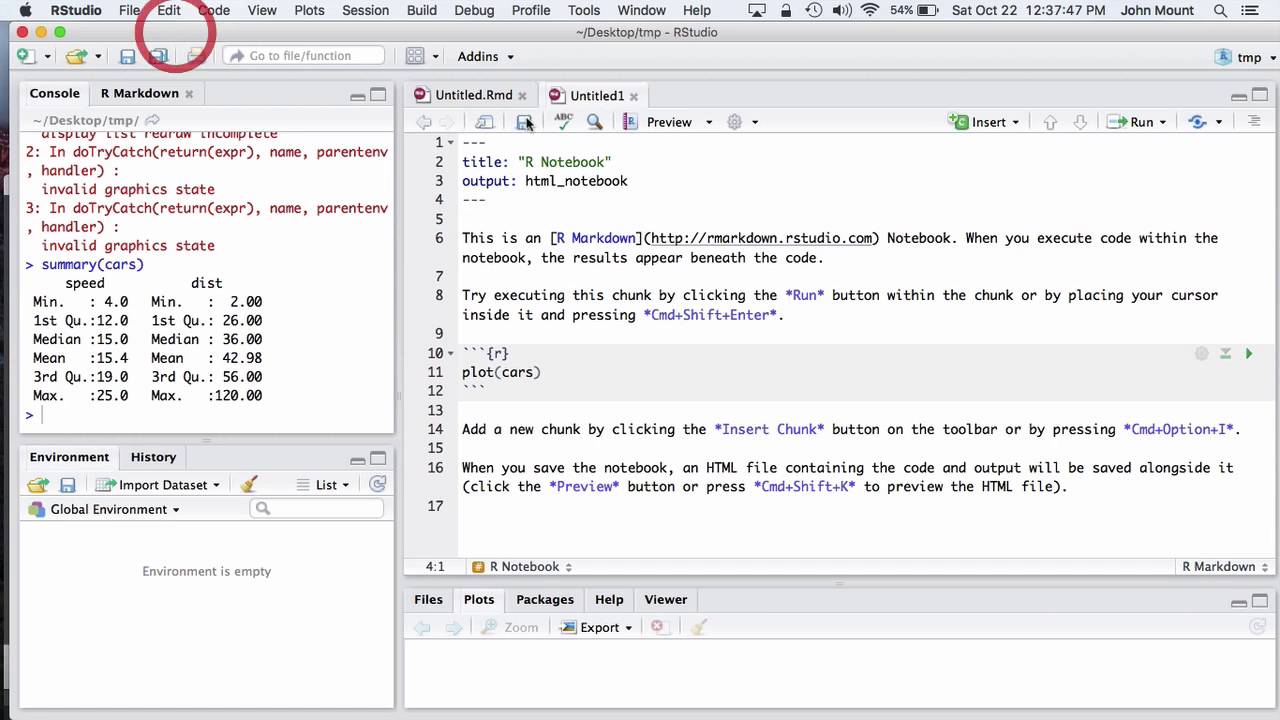

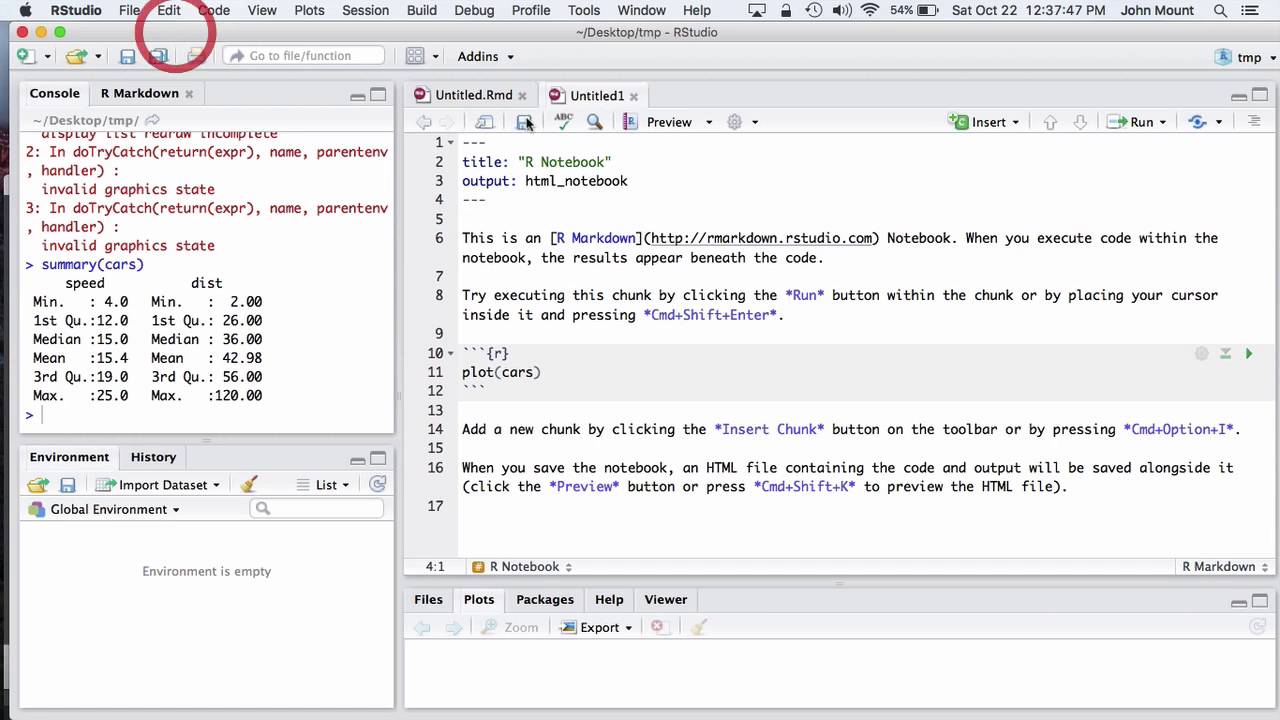

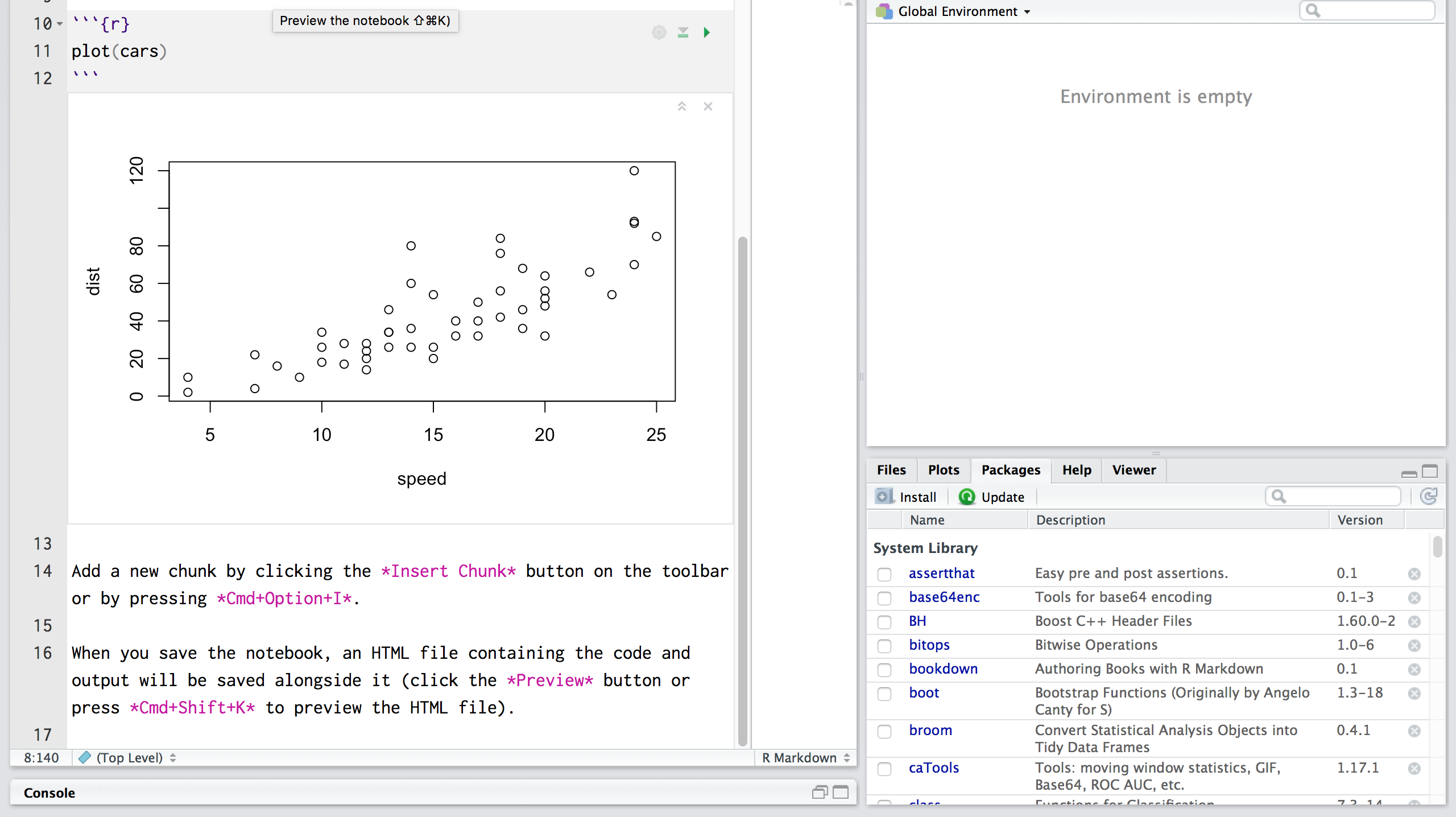

Example HTML Parser Application

As a basic example, below is a simple HTML parser that uses the

HTMLParser class to print out start tags, end tags, and data

as they are encountered:

from html.parser import HTMLParser

class MyHTMLParser(HTMLParser):
    def handle_starttag(self, tag, attrs):
        print("Encountered a start tag:", tag)

    def handle_endtag(self, tag):
        print("Encountered an end tag:", tag)

    def handle_data(self, data):
        print("Encountered some data:", data)

parser = MyHTMLParser()
parser.feed('<html><head><title>Test</title></head>'
            '<body><h1>Parse me!</h1></body></html>')

The output will then be:

Encountered a start tag: html

Encountered a start tag: head

Encountered a start tag: title

Encountered some data: Test

Encountered an end tag: title

Encountered an end tag: head

Encountered a start tag: body

Encountered a start tag: h1

Encountered some data: Parse me!

Encountered an end tag: h1

Encountered an end tag: body

Encountered an end tag: html

HTMLParser Methods

HTMLParser instances have the following methods:

HTMLParser.feed(data)

Feed some text to the parser. It is processed insofar as it consists of

complete elements; incomplete data is buffered until more data is fed or

close() is called. data must be str.

HTMLParser.close()

Force processing of all buffered data as if it were followed by an end-of-file

mark. This method may be redefined by a derived class to define additional

processing at the end of the input, but the redefined version should always call

the HTMLParser base class method close().
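To illustrate the buffering that feed() and close() describe, here is a small sketch in which a start tag arrives split across two calls. The EventLog class is a hypothetical name used only for this example.

```python
from html.parser import HTMLParser


class EventLog(HTMLParser):
    """Remember the start tags and text seen so far."""

    def __init__(self):
        super().__init__()
        self.tags = []
        self.text = []

    def handle_starttag(self, tag, attrs):
        self.tags.append(tag)

    def handle_data(self, data):
        self.text.append(data)


parser = EventLog()
parser.feed('<a hre')               # incomplete start tag: buffered, no handler call yet
tags_after_first_feed = list(parser.tags)
parser.feed('f="#main">link</a>')   # the tag is now complete and gets processed
parser.close()                      # flush anything that is still buffered
tags_after_close = list(parser.tags)
full_text = "".join(parser.text)
print(tags_after_first_feed, tags_after_close, full_text)
```

Joining the collected text rather than relying on a single handle_data() call is a deliberate choice here, since text may be delivered in several pieces.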

HTMLParser.reset()

Reset the instance. Loses all unprocessed data. This is called implicitly at

instantiation time.

HTMLParser.getpos()

Return current line number and offset.

HTMLParser.get_starttag_text()

Return the text of the most recently opened start tag. This should not normally

be needed for structured processing, but may be useful in dealing with HTML “as

deployed” or for re-generating input with minimal changes (whitespace between

attributes can be preserved, etc.).
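The sketch below shows one way getpos() and get_starttag_text() might be used together inside a handler. PositionLogger is a made-up class name; the input string is an arbitrary example.

```python
from html.parser import HTMLParser


class PositionLogger(HTMLParser):
    """Store, for every start tag, where it began and how it was written."""

    def __init__(self):
        super().__init__()
        self.records = []

    def handle_starttag(self, tag, attrs):
        # getpos() gives (line, offset); get_starttag_text() gives the raw source text
        self.records.append((tag, self.getpos(), self.get_starttag_text()))


parser = PositionLogger()
parser.feed('<p>\n  <a  HREF="#top">up</a></p>')
parser.close()
for record in parser.records:
    print(record)
```

Note how the raw start tag keeps the original spacing and upper-case attribute name, while the tag argument itself is lower-cased.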

The following methods are called when data or markup elements are encountered

and they are meant to be overridden in a subclass. The base class

implementations do nothing (except for handle_startendtag()):

HTMLParser.handle_starttag(tag, attrs)

This method is called to handle the start of a tag (e.g. <div id="main">).

The tag argument is the name of the tag converted to lower case. The attrs

argument is a list of (name, value) pairs containing the attributes found

inside the tag’s <> brackets. The name will be translated to lower case,

and quotes in the value have been removed, and character and entity references

have been replaced.

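For instance, for the tag <A HREF="…">, this method would be called as handle_starttag('a', [('href', '…')]).

Parsing a doctype:

>>> parser.feed('<!DOCTYPE HTML PUBLIC "-//W3C//DTD HTML 4.01//EN" "…">')

Decl: DOCTYPE HTML PUBLIC "-//W3C//DTD HTML 4.01//EN" "…"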
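The example sessions in this section print one line per event ("Start tag:", "attr:", "Data:" and so on). The class that produces this output is not reproduced here, so the following is only a sketch of a parser that would print lines in a similar shape. PrintingParser and its emit() helper are assumptions made for illustration.

```python
from html.parser import HTMLParser


class PrintingParser(HTMLParser):
    """Print (and remember) one line for every parsing event."""

    def __init__(self):
        super().__init__()
        self.lines = []

    def emit(self, line):
        # Hypothetical helper: keep the line for inspection and print it
        self.lines.append(line)
        print(line)

    def handle_starttag(self, tag, attrs):
        self.emit(f"Start tag: {tag}")
        for attr in attrs:
            self.emit(f"attr: {attr}")

    def handle_endtag(self, tag):
        self.emit(f"End tag: {tag}")

    def handle_data(self, data):
        self.emit(f"Data: {data}")

    def handle_comment(self, data):
        self.emit(f"Comment: {data}")

    def handle_decl(self, data):
        self.emit(f"Decl: {data}")


parser = PrintingParser()
parser.feed('<H1 Class=Title>Python</H1>')
```

The tag and attribute names come back lower-cased, while the unquoted attribute value keeps its original capitalisation.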

Parsing an element with a few attributes and a title:

>>> parser.feed('<img src="…" alt="The Python logo">')

Start tag: img

attr: ('src', '…')

attr: ('alt', 'The Python logo')

>>>

>>> parser.feed('<h1>Python</h1>')

Start tag: h1

Data: Python

End tag: h1

The content of script and style elements is returned as is, without

further parsing:

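>>> parser.feed('<style type="text/css">#python { color: green }</style>')

Start tag: style

attr: ('type', 'text/css')

Data: #python { color: green }

End tag: style

>>> parser.feed('<script type="text/javascript">alert("hello!");</script>')

Start tag: script

attr: ('type', 'text/javascript')

Data: alert("hello!");

End tag: script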

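Parsing comments:

>>> parser.feed('<!-- a comment -->'
...             '<!--[if IE 9]>IE-specific content<![endif]-->')

Comment: a comment

Comment: [if IE 9]>IE-specific content<![endif]

Parsing named and numeric character references and converting them to the correct character (these three references are all equivalent to '>'):

>>> parser.feed('&gt;&#62;&#x3E;')

Named ent: >

Num ent: >

Num ent: >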

Feeding incomplete chunks to feed() works, but

handle_data() might be called more than once

(unless convert_charrefs is set to True):

>>> for chunk in ['<sp', 'an>buff', 'ered ', 'text</s', 'pan>']:
...     parser.feed(chunk)

Start tag: span

Data: buff

Data: ered

Data: text

End tag: span

Parsing invalid HTML (e.g. unquoted attributes) also works:

>>> parser.feed('<p><a class=link href=#main>tag soup</p ></a>')

Start tag: p

Start tag: a

attr: ('class', 'link')

attr: ('href', '#main')

Data: tag soup

End tag: p

End tag: a

Web Scraping and Parsing HTML in Python with Beautiful Soup

The internet has an amazingly wide variety of information for human consumption. But this data is often difficult to access programmatically if it doesn’t come in the form of a dedicated REST API. With Python tools like Beautiful Soup, you can scrape and parse this data directly from web pages to use for your projects and applications.

Let’s use the example of scraping MIDI data from the internet to train a neural network with Magenta that can generate classic Nintendo-sounding music. In order to do this, we’ll need a set of MIDI music from old Nintendo games. Using Beautiful Soup we can get this data from the Video Game Music Archive.

Getting started and setting up dependencies

Before moving on, you will need to make sure you have an up to date version of Python 3 and pip installed. Make sure you create and activate a virtual environment before installing any dependencies.

You’ll need to install the Requests library for making HTTP requests to get data from the web page, and Beautiful Soup for parsing through the HTML.

With your virtual environment activated, run the following command in your terminal:

pip install requests==2.22.0 beautifulsoup4==4.8.1

We’re using Beautiful Soup 4 because it’s the latest version and Beautiful Soup 3 is no longer being developed or supported.

Using Requests to scrape data for Beautiful Soup to parse

First let’s write some code to grab the HTML from the web page, and look at how we can start parsing through it. The following code will send a GET request to the web page we want, and create a BeautifulSoup object with the HTML from that page:

import requests
from bs4 import BeautifulSoup

vgm_url = '…'  # URL of the archive page to scrape
html_text = requests.get(vgm_url).text
soup = BeautifulSoup(html_text, 'html.parser')

With this soup object, you can navigate and search through the HTML for data that you want. For example, if you run soup.title after the previous code in a Python shell, you’ll get the title of the web page. If you run print(soup.get_text()), you will see all of the text on the page.
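To try these calls without making a network request, here is a small sketch that builds the soup from an inline string. The sample_html content is invented for this example and is not taken from the Video Game Music Archive.

```python
from bs4 import BeautifulSoup

# Invented stand-in for a downloaded page
sample_html = """
<html><head><title>Sample archive</title></head>
<body><h1>Tracks</h1><p>Two songs are listed.</p></body></html>
"""

soup = BeautifulSoup(sample_html, "html.parser")

page_title = soup.title.string               # text inside the <title> tag
page_text = soup.get_text(" ", strip=True)   # all text, whitespace tidied up

print(page_title)
print(page_text)
```

Passing a separator and strip=True to get_text() is optional; it simply avoids the runs of newlines that raw page text tends to contain.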

Getting familiar with Beautiful Soup

The find() and find_all() methods are among the most powerful weapons in your arsenal. soup.find() is great for cases where you know there is only one element you’re looking for, such as the body tag. On this page, soup.find(id='banner_ad').text will get you the text from the HTML element for the banner advertisement.

soup.find_all() is the most common method you will be using in your web scraping adventures. Using this you can iterate through all of the hyperlinks on the page and print their URLs:

for link in soup.find_all('a'):
    print(link.get('href'))

You can also provide different arguments to find_all, such as regular expressions or tag attributes to filter your search as specifically as you want. You can find lots of cool features in the documentation.

Parsing and navigating HTML with BeautifulSoup

Before writing more code to parse the content that we want, let’s first take a look at the HTML that’s rendered by the browser. Every web page is different, and sometimes getting the right data out of them requires a bit of creativity, pattern recognition, and experimentation.

Our goal is to download a bunch of MIDI files, but there are a lot of duplicate tracks on this webpage as well as remixes of songs. We only want one of each song, and because we ultimately want to use this data to train a neural network to generate accurate Nintendo music, we won’t want to train it on user-created remixes.

When you’re writing code to parse through a web page, it’s usually helpful to use the developer tools available to you in most modern browsers. If you right-click on the element you’re interested in, you can inspect the HTML behind that element to figure out how you can programmatically access the data you want.

Let’s use the find_all method to go through all of the links on the page, but use regular expressions to filter through them so we are only getting links that contain MIDI files whose text has no parentheses, which will allow us to exclude all of the duplicates and remixes.

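Create a new Python file and add the following code to it:

import re
import requests
from bs4 import BeautifulSoup

vgm_url = '…'  # URL of the archive page to scrape
html_text = requests.get(vgm_url).text
soup = BeautifulSoup(html_text, 'html.parser')

if __name__ == '__main__':
    # Only keep links whose href ends in .mid
    attrs = {
        'href': re.compile(r'\.mid$')
    }
    # Only keep links whose text contains no opening parenthesis
    tracks = soup.find_all('a', attrs=attrs, string=re.compile(r'^((?!\().)*$'))

    count = 0
    for track in tracks:
        print(track)
        count += 1
    print(len(tracks))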

This will filter through all of the MIDI files that we want on the page, print out the link tag corresponding to them, and then print how many files we filtered.
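The effect of the two regular expressions can be checked on a handful of invented links. In this sketch, sample_html and the track names are made up; the filters are the ones described above (an href ending in .mid and link text without an opening parenthesis).

```python
import re

from bs4 import BeautifulSoup

# Invented links: one original track, two variants in parentheses, one non-MIDI file
sample_html = """
<a href="zelda.mid">Overworld</a>
<a href="zelda2.mid">Overworld (Remix)</a>
<a href="zelda3.mid">Overworld (2)</a>
<a href="readme.txt">Read me</a>
"""

soup = BeautifulSoup(sample_html, "html.parser")

# Matches only strings in which no character is an opening parenthesis
no_parens = re.compile(r"^((?!\().)*$")

tracks = soup.find_all("a", href=re.compile(r"\.mid$"), string=no_parens)
titles = [track.text for track in tracks]
print(titles)
```

The negative lookahead (?!\() is tested before every character, so a single "(" anywhere in the link text is enough to reject it.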

Run the script in your terminal with the python command.

Downloading the MIDI files we want from the webpage

Now that we have working code to iterate through every MIDI file that we want, we have to write code to download all of them.

In the same file, add a function to your code called download_track, and call that function for each track in the loop iterating through them:

def download_track(count, track_element):
    # Get the title of the track from the HTML element
    track_title = track_element.text.strip().replace('/', '-')
    download_url = '{}{}'.format(vgm_url, track_element['href'])
    file_name = '{}_{}.mid'.format(count, track_title)

    # Download the track
    r = requests.get(download_url, allow_redirects=True)
    with open(file_name, 'wb') as f:
        f.write(r.content)

    # Print to the console to keep track of how the scraping is coming along.
    print('Downloaded: {} ({})'.format(track_title, download_url))

# Inside the loop over tracks:
download_track(count, track)

In this download_track function, we’re passing the Beautiful Soup object representing the HTML element of the link to the MIDI file, along with a unique number to use in the filename to avoid possible naming collisions.
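The file-naming step can be looked at in isolation. The build_file_name() helper below is a hypothetical extraction of that logic, assuming the counter prefix and the slash replacement described above, with a .mid extension.

```python
def build_file_name(count, raw_title):
    """Hypothetical helper: turn a link's text into a safe, unique file name."""
    # Slashes would be read as directory separators, so swap them for dashes
    title = raw_title.strip().replace("/", "-")
    # The counter prefix keeps two tracks with the same title from colliding
    return "{}_{}.mid".format(count, title)


first = build_file_name(0, "  Stage 1/Boss ")
second = build_file_name(1, "Stage 1/Boss")
print(first)
print(second)
```

Two links with identical text still end up in different files because the counter differs.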

Run this code from a directory where you want to save all of the MIDI files, and watch your terminal screen display all 2230 MIDIs that you downloaded (at the time of writing this). This is just one specific practical example of what you can do with Beautiful Soup.

The vast expanse of the World Wide Web

Now that you can programmatically grab things from web pages, you have access to a huge source of data for whatever your projects need. One thing to keep in mind is that changes to a web page’s HTML might break your code, so make sure to keep everything up to date if you’re building applications on top of this.

If you’re looking for something to do with the data you just grabbed from the Video Game Music Archive, you can try using Python libraries like Mido to work with MIDI data and clean it up, use Magenta to train a neural network with it, or have fun building a phone number people can call to hear Nintendo music.

I’m looking forward to seeing what you build. Feel free to reach out and share your experiences or ask any questions.


Twitter: @Sagnewshreds

Github: Sagnew

Twitch (streaming live code): Sagnewshreds

Guide to Parsing HTML with BeautifulSoup in Python – Stack …

Introduction

Web scraping is programmatically collecting information from various websites. While there are many libraries and frameworks in various languages that can extract web data, Python has long been a popular choice because of its plethora of options for web scraping.

This article will give you a crash course on web scraping in Python with Beautiful Soup – a popular Python library for parsing HTML and XML.

Ethical Web Scraping

Web scraping is ubiquitous and gives us data as we would get with an API. However, as good citizens of the internet, it’s our responsibility to respect the site owners we scrape from. Here are some principles that a web scraper should adhere to:

Don’t claim scraped content as our own. Website owners sometimes spend a lengthy amount of time creating articles, collecting details about products or harvesting other content. We must respect their labor and originality.

Don’t scrape a website that doesn’t want to be scraped. Websites sometimes come with a robots.txt file, which defines the parts of a website that can be scraped. Many websites also have a Terms of Use which may not allow scraping. We must respect websites that do not want to be scraped.

Is there an API available already? Splendid, there’s no need for us to write a scraper. APIs are created to provide access to data in a controlled way as defined by the owners of the data. We prefer to use APIs if they’re available.

Making requests to a website can cause a toll on a website’s performance. A web scraper that makes too many requests can be as debilitating as a DDOS attack. We must scrape responsibly so we won’t cause any disruption to the regular functioning of the website.
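Some of these principles can be checked in code. The sketch below uses the standard library’s urllib.robotparser on an invented set of rules; the rule lines, the "my-scraper" user agent and the example.com URLs are all assumptions made for illustration, and a real scraper would load the site’s actual file instead.

```python
from urllib.robotparser import RobotFileParser

# Invented rules, standing in for a site's real robots.txt
robots_lines = [
    "User-agent: *",
    "Disallow: /private/",
    "Crawl-delay: 2",
]

rules = RobotFileParser()
rules.parse(robots_lines)

allowed = rules.can_fetch("my-scraper", "https://example.com/catalogue/page-1.html")
blocked = rules.can_fetch("my-scraper", "https://example.com/private/data.html")
delay = rules.crawl_delay("my-scraper")  # seconds to wait between requests, if stated

print(allowed, blocked, delay)
```

A scraper could skip any URL for which can_fetch() returns False and pass the delay to time.sleep() between requests.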

An Overview of Beautiful Soup

The HTML content of the webpages can be parsed and scraped with Beautiful Soup. In the following section, we will be covering those functions that are useful for scraping webpages.

What makes Beautiful Soup so useful is the myriad functions it provides to extract data from HTML. This image below illustrates some of the functions we can use:

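Let’s get hands-on and see how we can parse HTML with Beautiful Soup. Consider an HTML page saved to a file. Its head holds a title (Head’s title); its body holds a paragraph with the “title” class (Body’s title), followed by the text “line begins”, three links labelled 1, 2 and 3, and the text “line ends”.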

The following code snippets are tested on Ubuntu 20.04.1 LTS. You can install the BeautifulSoup module by typing the following command in the terminal:

$ pip3 install beautifulsoup4

The HTML file needs to be prepared. This is done by passing the file to the BeautifulSoup constructor. Let’s use the interactive Python shell for this, so we can instantly print the contents of a specific part of a page:

from bs4 import BeautifulSoup

with open("…") as fp:
    soup = BeautifulSoup(fp, "html.parser")

Now we can use Beautiful Soup to navigate our website and extract data.

Navigating to Specific Tags

From the soup object created in the previous section, let’s get the title tag of the document:

soup.head.title # returns <title>Head's title</title>

Here’s a breakdown of each component we used to get the title:

Beautiful Soup is powerful because our Python objects match the nested structure of the HTML document we are scraping.

To get the text of the first a tag, enter this:

soup.body.a.text # returns '1'

To get the title within the HTML’s body tag (denoted by the “title” class), type the following in your terminal:

soup.body.p.b # returns <b>Body's title</b>

For deeply nested HTML documents, navigation could quickly become tedious. Luckily, Beautiful Soup comes with a search function so we don’t have to navigate to retrieve HTML elements.
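The navigation steps in this section can be tried on an inline string as well. The html_doc below is a stand-in written for this sketch: it is consistent with the text values mentioned in this guide, but its exact markup and its example.com links are assumptions.

```python
from bs4 import BeautifulSoup

# Stand-in document; the markup details are assumed for illustration
html_doc = """<html><head><title>Head's title</title></head>
<body>
<p class="title"><b>Body's title</b></p>
<p class="story">line begins
<a href="https://example.com/1" class="element" id="link1">1</a>
<a href="https://example.com/2" class="element" id="link2">2</a>
<a href="https://example.com/3" class="avatar" id="link3">3</a>
</p>
<p>line ends</p>
</body></html>"""

soup = BeautifulSoup(html_doc, "html.parser")

head_title = soup.head.title.text    # walk html -> head -> title
first_link_text = soup.body.a.text   # first <a> anywhere inside <body>
body_title = soup.body.p.b.text      # first <p> in <body>, then its <b>

print(head_title, first_link_text, body_title)
```

Each attribute access returns the first matching descendant, which is why soup.body.a yields the link labelled 1.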

Searching the Elements of Tags

The find_all() method takes an HTML tag as a string argument and returns the list of elements that match the provided tag. For example, if we want all a tags in the document:

soup.find_all("a")

We’ll see this list of a tags as output:

[<a …>1</a>, <a …>2</a>, <a …>3</a>]

Here’s a breakdown of each component we used to search for a tag:

We can search for tags of a specific class as well by providing the class_ argument. Beautiful Soup uses class_ because class is a reserved keyword in Python. Let’s search for all a tags that have the “element” class:

soup.find_all("a", class_="element")

As we only have two links with the “element” class, you’ll see this output:

[<a …>1</a>, <a …>2</a>]

What if we wanted to fetch the links embedded inside the a tags? Let’s retrieve a link’s href attribute using the find() option. It works just like find_all() but it returns the first matching element instead of a list. Type this in your shell:

soup.find("a", href=True)["href"] # returns the href value of the first a tag

The find() and find_all() functions also accept a regular expression instead of a string. Behind the scenes, the text will be filtered using the compiled regular expression’s search() method. For example:

import re

for tag in soup.find_all(re.compile("^b")):
    print(tag)

Iterating over the resulting list fetches the tags whose names start with the character b, which includes <body> and <b>:

1

2

3

Body’s title
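The searches from this section can be reproduced on a small inline document. As before, html_doc and its example.com links are assumptions made for this sketch, not the original file.

```python
import re

from bs4 import BeautifulSoup

# Stand-in document; the markup details are assumed for illustration
html_doc = """<html><head><title>Head's title</title></head>
<body>
<p class="title"><b>Body's title</b></p>
<p class="story">
<a href="https://example.com/1" class="element">1</a>
<a href="https://example.com/2" class="element">2</a>
<a href="https://example.com/3" class="avatar">3</a>
</p>
</body></html>"""

soup = BeautifulSoup(html_doc, "html.parser")

all_links = [a.text for a in soup.find_all("a")]
element_links = [a.text for a in soup.find_all("a", class_="element")]
first_href = soup.find("a", href=True)["href"]
b_tags = [tag.name for tag in soup.find_all(re.compile("^b"))]

print(all_links, element_links, first_href, b_tags)
```

Collecting tag.name instead of printing whole tags keeps the regular-expression result short: the pattern ^b matches the tag names body and b, in document order.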

We’ve covered the most popular ways to get tags and their attributes. Sometimes, especially for less dynamic web pages, we just want the text from it. Let’s see how we can get it!

Getting the Whole Text

The get_text() function retrieves all the text from the HTML document. Let’s call it on our soup object:

soup.get_text()

Your output should be like this:

Head’s title

Body’s title

line begins

1

2

3

line ends

Sometimes the newline characters are printed, so your output may look like this as well:

“\n\nHead’s title\n\n\nBody’s title\nline begins\n 1\n2\n3\n line ends\n\n”
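If the stray newlines are unwanted, get_text() accepts a separator and a strip flag, and stripped_strings yields the cleaned pieces one by one. The short html_doc here is an invented fragment used only to show the difference.

```python
from bs4 import BeautifulSoup

# Invented fragment with the kind of whitespace shown above
html_doc = '<p class="story">line begins\n <a>1</a>\n<a>2</a>\n<a>3</a>\n line ends</p>'

soup = BeautifulSoup(html_doc, "html.parser")

raw_text = soup.get_text()                   # whitespace kept exactly as in the source
tidy_text = soup.get_text(" ", strip=True)   # pieces stripped, then joined by one space
pieces = list(soup.stripped_strings)         # the same pieces as a list

print(repr(raw_text))
print(tidy_text)
print(pieces)
```

Whitespace-only strings are dropped entirely when strip=True is used, so the links end up separated by single spaces.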

Now that we have a feel for how to use Beautiful Soup, let’s scrape a website!

Beautiful Soup in Action – Scraping a Book List

Now that we have mastered the components of Beautiful Soup, it’s time to put our learning to use. Let’s build a scraper to extract data from a book-listing site and save it to a CSV file. The site contains random data about books and is a great space to test out your web scraping techniques.

First, create a new Python file. Let’s import all the libraries we need for this script:

import requests
import time
import csv
import re
from bs4 import BeautifulSoup

In the modules mentioned above:

requests – performs the URL request and fetches the website’s HTML

time – limits how many times we scrape the page at once

csv – helps us export our scraped data to a CSV file

re – allows us to write regular expressions that will come in handy for picking text based on its pattern

bs4 – yours truly, the scraping module to parse the HTML

You would have bs4 already installed, and time, csv, and re are built-in packages in Python. You’ll need to install the requests module directly like this:

$ pip3 install requests

Before you begin, you need to understand how the webpage’s HTML is structured. In your browser, open the site. Then right-click on the components of the webpage to be scraped, and click on the inspect button to understand the hierarchy of the tags as shown below.

This will show you the underlying HTML for what you’re inspecting. The following picture illustrates these steps:

From inspecting the HTML, we learn how to access the URL of the book, the cover image, the title, the rating, the price, and more fields from the HTML. Let’s write a function that scrapes a book item and extracts its data:

def scrape(source_url, soup):  # Takes the site's base URL and the page's soup as params
    # Find the elements of the article tag
    books = soup.find_all("article", class_="product_pod")

    # Iterate over each book article tag
    for each_book in books:
        info_url = source_url + "/" + each_book.h3.find("a")["href"]
        cover_url = source_url + "/catalogue" + \
            each_book.a.img["src"].replace("..", "")

        title = each_book.h3.find("a")["title"]
        rating = each_book.find("p", class_="star-rating")["class"][1]
        # can also be written as: each_book.h3.find("a").get("title")
        # Drop non-ASCII characters (such as the currency sign) from the price
        price = each_book.find("p", class_="price_color").text.strip().encode(
            "ascii", "ignore").decode("ascii")
        availability = each_book.find(
            "p", class_="instock availability").text.strip()

        # Invoke the write_to_csv function
        write_to_csv([info_url, cover_url, title, rating, price, availability])

The last line of the above snippet points to a function to write the list of scraped strings to a CSV file. Let’s add that function now:

def write_to_csv(list_input):
    # The scraped info will be written to a CSV here.
    try:
        # Open the csv file in append mode; newline="" leaves line endings to the csv module
        with open("…", "a", newline="") as fopen:
            csv_writer = csv.writer(fopen)
            csv_writer.writerow(list_input)
    except OSError:
        return False
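To see what appending rows with csv.writer produces, here is a self-contained sketch that writes to a temporary file and reads it back. The append_row() helper, the books_demo.csv name and the sample rows are invented for this example.

```python
import csv
import os
import tempfile


def append_row(path, row):
    """Hypothetical helper: append one row to the CSV file at path."""
    # newline="" lets the csv module control line endings on every platform
    with open(path, "a", newline="", encoding="utf-8") as handle:
        csv.writer(handle).writerow(row)


# Write into a throwaway directory so the example leaves no files behind in the project
csv_path = os.path.join(tempfile.mkdtemp(), "books_demo.csv")

append_row(csv_path, ["A title, with a comma", "Three", "51.77", "In stock"])
append_row(csv_path, ["Another title", "One", "53.74", "In stock"])

with open(csv_path, newline="", encoding="utf-8") as handle:
    rows = list(csv.reader(handle))

print(rows)
```

The csv module quotes the first title automatically because it contains a comma, which is one reason to prefer it over joining strings by hand.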

As we have a function that can scrape a page and export to CSV, we want another function that crawls through the paginated website, collecting book data on each page.

To do this, let’s look at the URL we are writing this scraper for:

"…"

The only varying element in the URL is the page number. We can format the URL dynamically so it becomes a seed URL:

"…{}…".format(str(page_number))

This string-formatted URL with the page number can be fetched using the requests.get() method. We can then create a new BeautifulSoup object. Every time we get the soup object, the presence of the “next” button is checked so we can stop at the last page. We keep track of a counter for the page number that’s incremented by 1 after successfully scraping a page.

def browse_and_scrape(seed_url, page_number=1):
    # Fetch the URL - We will be using this to append to images and info routes
    url_pat = re.compile(r"(https?://[^/]+)")  # scheme and host of the seed URL
    source_url = url_pat.search(seed_url).group(0)

    # Page_number from the argument gets formatted in the URL & Fetched
    formatted_url = seed_url.format(str(page_number))

    try:
        html_text = requests.get(formatted_url).text
        # Prepare the soup
        soup = BeautifulSoup(html_text, "html.parser")
        print(f"Now Scraping - {formatted_url}")

        # This if clause stops the script when it hits the last page
        if soup.find("li", class_="next") is not None:
            scrape(source_url, soup)  # Invoke the scrape function
            # Be a responsible citizen by waiting before you hit again
            time.sleep(3)
            page_number += 1
            # Recursively invoke the same function with the increment
            return browse_and_scrape(seed_url, page_number)
        else:
            scrape(source_url, soup)  # The script exits here
            return True
    except Exception as e:
        return e

The function above, browse_and_scrape(), is recursively called until soup.find("li", class_="next") returns None. At this point, the code will scrape the remaining part of the webpage and exit.
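The same stop condition also works in a plain loop, which avoids deep recursion on sites with many pages. The sketch below swaps the network call for an in-memory dictionary so it can run anywhere; FAKE_PAGES, fetch() and crawl() are invented names, and a real crawler would call requests.get() and sleep between pages as described above.

```python
from bs4 import BeautifulSoup

# Invented three-page "site": only the last page lacks a "next" button
FAKE_PAGES = {
    1: '<article class="product_pod">A</article><li class="next"><a href="page-2.html">next</a></li>',
    2: '<article class="product_pod">B</article><li class="next"><a href="page-3.html">next</a></li>',
    3: '<article class="product_pod">C</article>',
}


def fetch(page_number):
    """Stand-in for requests.get(formatted_url).text."""
    return FAKE_PAGES[page_number]


def crawl(start_page=1):
    """Visit pages in order until one has no "next" button."""
    titles = []
    page_number = start_page
    while True:
        soup = BeautifulSoup(fetch(page_number), "html.parser")
        # Scrape the current page first, so the last page is included too
        titles.extend(a.text for a in soup.find_all("article", class_="product_pod"))
        if soup.find("li", class_="next") is None:
            break
        page_number += 1
    return titles, page_number


titles, last_page = crawl()
print(titles, last_page)
```

Scraping before checking for the button mirrors the recursive version, where the final page is still scraped in the else branch.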

For the final piece to the puzzle, we initiate the scraping flow. We define the seed_url and call the browse_and_scrape() to get the data. This is done under the if __name__ == “__main__” block:

if __name__ == "__main__":
    seed_url = "…{}…"  # seed URL with a {} placeholder for the page number
    print("Web scraping has begun")
    result = browse_and_scrape(seed_url)
    if result == True:
        print("Web scraping is now complete!")
    else:
        print(f"Oops, That doesn't seem right!!! - {result}")

If you’d like to learn more about the if __name__ == “__main__” block, check out our guide on how it works.

You can execute the script as shown below in your terminal and get the output as:

$ python

Web scraping has begun

Now Scraping - …

Now Scraping - …

Now Scraping - …

…

Now Scraping - …

Now Scraping - …

Web scraping is now complete!

The scraped data can be found in the CSV file in the current working directory. Here’s a sample of the file’s content:

Light in the Attic, Three, 51. 77, In stock

the Velvet, One, 53. 74, In stock

stock

Good job! If you wanted to have a look at the scraper code as a whole, you can find it on GitHub.

Conclusion

In this tutorial, we learned the ethics of writing good web scrapers. We then used Beautiful Soup to extract data from an HTML file using the Beautiful Soup object’s properties and its various methods like find(), find_all() and get_text(). We then built a scraper that retrieves a book list online and exports it to CSV.

Web scraping is a useful skill that helps in various activities such as extracting data like an API, performing QA on a website, checking for broken URLs on a website, and more. What’s the next scraper you’re going to build?

Frequently Asked Questions about parse html with python

Example:

from html.parser import HTMLParser

class Parser(HTMLParser):
    # method to append the start tag to the list start_tags
    def handle_starttag(self, tag, attrs):
        global start_tags
        start_tags.append(tag)

    # method to append the end tag to the list end_tags
    def handle_endtag(self, tag):
        ...
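Since the snippet above is cut off, here is one way the idea could be completed. This is a sketch rather than the original code: it keeps the two lists on the parser instance instead of in global variables, and the TagCollector name and the sample markup are made up.

```python
from html.parser import HTMLParser


class TagCollector(HTMLParser):
    """Collect the names of all start tags and end tags."""

    def __init__(self):
        super().__init__()
        self.start_tags = []
        self.end_tags = []

    def handle_starttag(self, tag, attrs):
        self.start_tags.append(tag)

    def handle_endtag(self, tag):
        self.end_tags.append(tag)


collector = TagCollector()
collector.feed("<html><body><h1>Title</h1><br></body></html>")
collector.close()

print(collector.start_tags)
print(collector.end_tags)
```

The br element shows up only among the start tags, which reflects the point made earlier that the parser does not check that start and end tags match.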

To extract data using web scraping with Python, you need to follow these basic steps: find the URL that you want to scrape, inspect the page, find the data you want to extract, write the code, run the code and extract the data, and store the data in the required format.

Beautiful Soup (bs4) is a Python library that is used to parse information out of HTML or XML files. It parses its input into an object on which you can run a variety of searches.