Parse Html Beautifulsoup

Guide to Parsing HTML with BeautifulSoup in Python – Stack …

Introduction

Web scraping is programmatically collecting information from various websites. While there are many libraries and frameworks in various languages that can extract web data, Python has long been a popular choice because of its plethora of options for web scraping.

This article will give you a crash course on web scraping in Python with Beautiful Soup – a popular Python library for parsing HTML and XML.

Ethical Web Scraping

Web scraping is ubiquitous and gives us data as we would get with an API. However, as good citizens of the internet, it’s our responsibility to respect the site owners we scrape from. Here are some principles that a web scraper should adhere to:

Don’t claim scraped content as our own. Website owners sometimes spend a lengthy amount of time creating articles, collecting details about products or harvesting other content. We must respect their labor and originality.

Don’t scrape a website that doesn’t want to be scraped. Websites sometimes come with a file – which defines the parts of a website that can be scraped. Many websites also have a Terms of Use which may not allow scraping. We must respect websites that do not want to be scraped.

Is there an API available already? Splendid, there’s no need for us to write a scraper. APIs are created to provide access to data in a controlled way as defined by the owners of the data. We prefer to use APIs if they’re available.

Making requests to a website can cause a toll on a website’s performance. A web scraper that makes too many requests can be as debilitating as a DDOS attack. We must scrape responsibly so we won’t cause any disruption to the regular functioning of the website.

An Overview of Beautiful Soup

The HTML content of the webpages can be parsed and scraped with Beautiful Soup. In the following section, we will be covering those functions that are useful for scraping webpages.

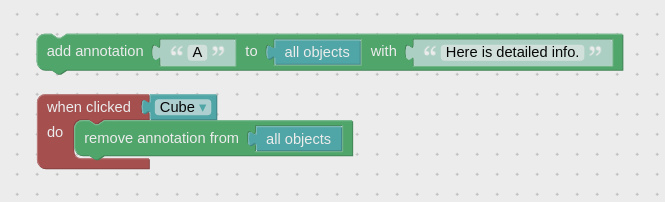

What makes Beautiful Soup so useful is the myriad functions it provides to extract data from HTML. This image below illustrates some of the functions we can use:

Let’s get hands-on and see how we can parse HTML with Beautiful Soup. Consider the following HTML page saved to file as

Body’s title

line ends

The following code snippets are tested on Ubuntu 20. 04. 1 LTS. You can install the BeautifulSoup module by typing the following command in the terminal:

$ pip3 install beautifulsoup4

The HTML file needs to be prepared. This is done by passing the file to the BeautifulSoup constructor, let’s use the interactive Python shell for this, so we can instantly print the contents of a specific part of a page:

from bs4 import BeautifulSoup

with open(“”) as fp:

soup = BeautifulSoup(fp, “”)

Now we can use Beautiful Soup to navigate our website and extract data.

Navigating to Specific Tags

From the soup object created in the previous section, let’s get the title tag of

# returns

Here’s a breakdown of each component we used to get the title:

Beautiful Soup is powerful because our Python objects match the nested structure of the HTML document we are scraping.

To get the text of the first tag, enter this:

# returns ‘1’

To get the title within the HTML’s body tag (denoted by the “title” class), type the following in your terminal:

# returns Body’s title

For deeply nested HTML documents, navigation could quickly become tedious. Luckily, Beautiful Soup comes with a search function so we don’t have to navigate to retrieve HTML elements.

Searching the Elements of Tags

The find_all() method takes an HTML tag as a string argument and returns the list of elements that match with the provided tag. For example, if we want all a tags in

nd_all(“a”)

We’ll see this list of a tags as output:

[1, 2, 3]

Here’s a breakdown of each component we used to search for a tag:

We can search for tags of a specific class as well by providing the class_ argument. Beautiful Soup uses class_ because class is a reserved keyword in Python. Let’s search for all a tags that have the “element” class:

nd_all(“a”, class_=”element”)

As we only have two links with the “element” class, you’ll see this output:

[1, 2]

What if we wanted to fetch the links embedded inside the a tags? Let’s retrieve a link’s href attribute using the find() option. It works just like find_all() but it returns the first matching element instead of a list. Type this in your shell:

(“a”, href=True)[“href”] # returns

The find() and find_all() functions also accept a regular expression instead of a string. Behind the scenes, the text will be filtered using the compiled regular expression’s search() method. For example:

import re

for tag in nd_all(mpile(“^b”)):

print(tag)

The list upon iteration, fetches the tags starting with the character b which includes and :

1

2

3

Body’s title

Check out our hands-on, practical guide to learning Git, with best-practices, industry-accepted standards, and included cheat sheet. Stop Googling Git commands and actually learn it! We’ve covered the most popular ways to get tags and their attributes. Sometimes, especially for less dynamic web pages, we just want the text from it. Let’s see how we can get it!

Getting the Whole Text

The get_text() function retrieves all the text from the HTML document. Let’s get all the text of the HTML document:

t_text()

Your output should be like this:

Head’s title

Body’s title

line begins

1

2

3

line ends

Sometimes the newline characters are printed, so your output may look like this as well:

“\n\nHead’s title\n\n\nBody’s title\nline begins\n 1\n2\n3\n line ends\n\n”

Now that we have a feel for how to use Beautiful Soup, let’s scrape a website!

Beautiful Soup in Action – Scraping a Book List

Now that we have mastered the components of Beautiful Soup, it’s time to put our learning to use. Let’s build a scraper to extract data from and save it to a CSV file. The site contains random data about books and is a great space to test out your web scraping techniques.

First, create a new file called Let’s import all the libraries we need for this script:

import requests

import time

import csv

In the modules mentioned above:

requests – performs the URL request and fetches the website’s HTML

time – limits how many times we scrape the page at once

csv – helps us export our scraped data to a CSV file

re – allows us to write regular expressions that will come in handy for picking text based on its pattern

bs4 – yours truly, the scraping module to parse the HTML

You would have bs4 already installed, and time, csv, and re are built-in packages in Python. You’ll need to install the requests module directly like this:

$ pip3 install requests

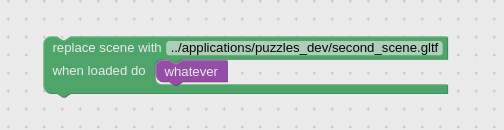

Before you begin, you need to understand how the webpage’s HTML is structured. In your browser, let’s go to. Then right-click on the components of the webpage to be scraped, and click on the inspect button to understand the hierarchy of the tags as shown below.

This will show you the underlying HTML for what you’re inspecting. The following picture illustrates these steps:

From inspecting the HTML, we learn how to access the URL of the book, the cover image, the title, the rating, the price, and more fields from the HTML. Let’s write a function that scrapes a book item and extract its data:

def scrape(source_url, soup): # Takes the driver and the subdomain for concats as params

# Find the elements of the article tag

books = nd_all(“article”, class_=”product_pod”)

# Iterate over each book article tag

for each_book in books:

info_url = source_url+”/”(“a”)[“href”]

cover_url = source_url+”/catalogue” + \

[“src”]. replace(“.. “, “”)

title = (“a”)[“title”]

rating = (“p”, class_=”star-rating”)[“class”][1]

# can also be written as: (“a”)(“title”)

price = (“p”, class_=”price_color”)()(

“ascii”, “ignore”)(“ascii”)

availability = (

“p”, class_=”instock availability”)()

# Invoke the write_to_csv function

write_to_csv([info_url, cover_url, title, rating, price, availability])

The last line of the above snippet points to a function to write the list of scraped strings to a CSV file. Let’s add that function now:

def write_to_csv(list_input):

# The scraped info will be written to a CSV here.

try:

with open(“”, “a”) as fopen: # Open the csv file.

csv_writer = (fopen)

csv_writer. writerow(list_input)

except:

return False

As we have a function that can scrape a page and export to CSV, we want another function that crawls through the paginated website, collecting book data on each page.

To do this, let’s look at the URL we are writing this scraper for:

”

The only varying element in the URL is the page number. We can format the URL dynamically so it becomes a seed URL:

“}”(str(page_number))

This string formatted URL with the page number can be fetched using the method (). We can then create a new BeautifulSoup object. Every time we get the soup object, the presence of the “next” button is checked so we could stop at the last page. We keep track of a counter for the page number that’s incremented by 1 after successfully scraping a page.

def browse_and_scrape(seed_url, page_number=1):

# Fetch the URL – We will be using this to append to images and info routes

url_pat = mpile(r”(. *\)”)

source_url = (seed_url)(0)

# Page_number from the argument gets formatted in the URL & Fetched

formatted_url = (str(page_number))

html_text = (formatted_url)

# Prepare the soup

soup = BeautifulSoup(html_text, “”)

print(f”Now Scraping – {formatted_url}”)

# This if clause stops the script when it hits an empty page

if (“li”, class_=”next”)! = None:

scrape(source_url, soup) # Invoke the scrape function

# Be a responsible citizen by waiting before you hit again

(3)

page_number += 1

# Recursively invoke the same function with the increment

browse_and_scrape(seed_url, page_number)

else:

scrape(source_url, soup) # The script exits here

return True

except Exception as e:

return e

The function above, browse_and_scrape(), is recursively called until the function (“li”, class_=”next”) returns None. At this point, the code will scrape the remaining part of the webpage and exit.

For the final piece to the puzzle, we initiate the scraping flow. We define the seed_url and call the browse_and_scrape() to get the data. This is done under the if __name__ == “__main__” block:

if __name__ == “__main__”:

seed_url = “}”

print(“Web scraping has begun”)

result = browse_and_scrape(seed_url)

if result == True:

print(“Web scraping is now complete! “)

print(f”Oops, That doesn’t seem right!!! – {result}”)

If you’d like to learn more about the if __name__ == “__main__” block, check out our guide on how it works.

You can execute the script as shown below in your terminal and get the output as:

$ python

Web scraping has begun

Now Scraping – Now Scraping – Now Scraping -…

Now Scraping – Now Scraping – Web scraping is now complete!

The scraped data can be found in the current working directory under the filename Here’s a sample the file’s content:

Light in the Attic, Three, 51. 77, In stock

the Velvet, One, 53. 74, In stock

stock

Good job! If you wanted to have a look at the scraper code as a whole, you can find it on GitHub.

Conclusion

In this tutorial, we learned the ethics of writing good web scrapers. We then used Beautiful Soup to extract data from an HTML file using the Beautiful Soup’s object properties, and it’s various methods like find(), find_all() and get_text(). We then built a scraper than retrieves a book list online and exports to CSV.

Web scraping is a useful skill that helps in various activities such as extracting data like an API, performing QA on a website, checking for broken URLs on a website, and more. What’s the next scraper you’re going to build?

Beautiful Soup 4.9.0 documentation – Crummy

Beautiful Soup is a

Python library for pulling data out of HTML and XML files. It works

with your favorite parser to provide idiomatic ways of navigating,

searching, and modifying the parse tree. It commonly saves programmers

hours or days of work.

These instructions illustrate all major features of Beautiful Soup 4,

with examples. I show you what the library is good for, how it works,

how to use it, how to make it do what you want, and what to do when it

violates your expectations.

This document covers Beautiful Soup version 4. 9. 3. The examples in

this documentation should work the same way in Python 2. 7 and Python

3. 8.

You might be looking for the documentation for Beautiful Soup 3.

If so, you should know that Beautiful Soup 3 is no longer being

developed and that support for it will be dropped on or after December

31, 2020. If you want to learn about the differences between Beautiful

Soup 3 and Beautiful Soup 4, see Porting code to BS4.

This documentation has been translated into other languages by

Beautiful Soup users:

这篇文档当然还有中文版.

このページは日本語で利用できます(外部リンク)

이 문서는 한국어 번역도 가능합니다.

Este documento também está disponível em Português do Brasil.

Эта документация доступна на русском языке.

Getting help¶

If you have questions about Beautiful Soup, or run into problems,

send mail to the discussion group. If

your problem involves parsing an HTML document, be sure to mention

what the diagnose() function says about

that document.

Here’s an HTML document I’ll be using as an example throughout this

document. It’s part of a story from Alice in Wonderland:

html_doc = “””

The Dormouse’s story

Once upon a time there were three little sisters; and their names were

Elsie,

Lacie and

Tillie;

and they lived at the bottom of a well.

…

“””

Running the “three sisters” document through Beautiful Soup gives us a

BeautifulSoup object, which represents the document as a nested

data structure:

from bs4 import BeautifulSoup

soup = BeautifulSoup(html_doc, ”)

print(ettify())

#

#

#

#

#

#

#

#

#

#

# Once upon a time there were three little sisters; and their names were

#

# Elsie

#

#,

#

# Lacie

# and

#

# Tillie

#; and they lived at the bottom of a well.

#…

#

#

Here are some simple ways to navigate that data structure:

#

# u’title’

# u’The Dormouse’s story’

# u’head’

soup. p

#

The Dormouse’s story

soup. p[‘class’]

soup. a

# Elsie

nd_all(‘a’)

# [Elsie,

# Lacie,

# Tillie]

(id=”link3″)

# Tillie

One common task is extracting all the URLs found within a page’s tags:

for link in nd_all(‘a’):

print((‘href’))

# # #

Another common task is extracting all the text from a page:

print(t_text())

#

# Elsie,

# Lacie and

# Tillie;

# and they lived at the bottom of a well.

Does this look like what you need? If so, read on.

If you’re using a recent version of Debian or Ubuntu Linux, you can

install Beautiful Soup with the system package manager:

$ apt-get install python-bs4 (for Python 2)

$ apt-get install python3-bs4 (for Python 3)

Beautiful Soup 4 is published through PyPi, so if you can’t install it

with the system packager, you can install it with easy_install or

pip. The package name is beautifulsoup4, and the same package

works on Python 2 and Python 3. Make sure you use the right version of

pip or easy_install for your Python version (these may be named

pip3 and easy_install3 respectively if you’re using Python 3).

$ easy_install beautifulsoup4

$ pip install beautifulsoup4

(The BeautifulSoup package is not what you want. That’s

the previous major release, Beautiful Soup 3. Lots of software uses

BS3, so it’s still available, but if you’re writing new code you

should install beautifulsoup4. )

If you don’t have easy_install or pip installed, you can

download the Beautiful Soup 4 source tarball and

install it with

$ python install

If all else fails, the license for Beautiful Soup allows you to

package the entire library with your application. You can download the

tarball, copy its bs4 directory into your application’s codebase,

and use Beautiful Soup without installing it at all.

I use Python 2. 7 and Python 3. 8 to develop Beautiful Soup, but it

should work with other recent versions.

Problems after installation¶

Beautiful Soup is packaged as Python 2 code. When you install it for

use with Python 3, it’s automatically converted to Python 3 code. If

you don’t install the package, the code won’t be converted. There have

also been reports on Windows machines of the wrong version being

installed.

If you get the ImportError “No module named HTMLParser”, your

problem is that you’re running the Python 2 version of the code under

Python 3.

If you get the ImportError “No module named ”, your

problem is that you’re running the Python 3 version of the code under

Python 2.

In both cases, your best bet is to completely remove the Beautiful

Soup installation from your system (including any directory created

when you unzipped the tarball) and try the installation again.

If you get the SyntaxError “Invalid syntax” on the line

ROOT_TAG_NAME = u'[document]’, you need to convert the Python 2

code to Python 3. You can do this either by installing the package:

$ python3 install

or by manually running Python’s 2to3 conversion script on the

bs4 directory:

$ 2to3-3. 2 -w bs4

Installing a parser¶

Beautiful Soup supports the HTML parser included in Python’s standard

library, but it also supports a number of third-party Python parsers.

One is the lxml parser. Depending on your setup,

you might install lxml with one of these commands:

$ apt-get install python-lxml

$ easy_install lxml

$ pip install lxml

Another alternative is the pure-Python html5lib parser, which parses HTML the way a

web browser does. Depending on your setup, you might install html5lib

with one of these commands:

$ apt-get install python-html5lib

$ easy_install html5lib

$ pip install html5lib

This table summarizes the advantages and disadvantages of each parser library:

Parser

Typical usage

Advantages

Disadvantages

Python’s

BeautifulSoup(markup, “”)

Batteries included

Decent speed

Lenient (As of Python 2. 7. 3

and 3. 2. )

Not as fast as lxml,

less lenient than

html5lib.

lxml’s HTML parser

BeautifulSoup(markup, “lxml”)

Very fast

Lenient

External C dependency

lxml’s XML parser

BeautifulSoup(markup, “lxml-xml”)

BeautifulSoup(markup, “xml”)

The only currently supported

XML parser

html5lib

BeautifulSoup(markup, “html5lib”)

Extremely lenient

Parses pages the same way a

web browser does

Creates valid HTML5

Very slow

External Python

dependency

If you can, I recommend you install and use lxml for speed. If you’re

using a very old version of Python – earlier than 2. 3 or 3. 2 –

it’s essential that you install lxml or html5lib. Python’s built-in

HTML parser is just not very good in those old versions.

Note that if a document is invalid, different parsers will generate

different Beautiful Soup trees for it. See Differences

between parsers for details.

To parse a document, pass it into the BeautifulSoup

constructor. You can pass in a string or an open filehandle:

with open(“”) as fp:

soup = BeautifulSoup(fp, ”)

soup = BeautifulSoup(“a web page“, ”)

First, the document is converted to Unicode, and HTML entities are

converted to Unicode characters:

print(BeautifulSoup(“Sacré bleu! “, “”))

# Sacré bleu!

Beautiful Soup then parses the document using the best available

parser. It will use an HTML parser unless you specifically tell it to

use an XML parser. (See Parsing XML. )

Beautiful Soup transforms a complex HTML document into a complex tree

of Python objects. But you’ll only ever have to deal with about four

kinds of objects: Tag, NavigableString, BeautifulSoup,

and Comment.

Tag¶

A Tag object corresponds to an XML or HTML tag in the original document:

soup = BeautifulSoup(‘Extremely bold‘, ”)

tag = soup. b

type(tag)

#

Tags have a lot of attributes and methods, and I’ll cover most of them

in Navigating the tree and Searching the tree. For now, the most

important features of a tag are its name and attributes.

Name¶

Every tag has a name, accessible as

If you change a tag’s name, the change will be reflected in any HTML

markup generated by Beautiful Soup:

= “blockquote”

tag

#

Extremely bold

Attributes¶

A tag may have any number of attributes. The tag has an attribute “id” whose value is

“boldest”. You can access a tag’s attributes by treating the tag like

a dictionary:

tag = BeautifulSoup(‘bold‘, ”). b

tag[‘id’]

# ‘boldest’

You can access that dictionary directly as

# {‘id’: ‘boldest’}

You can add, remove, and modify a tag’s attributes. Again, this is

done by treating the tag as a dictionary:

tag[‘id’] = ‘verybold’

tag[‘another-attribute’] = 1

#

del tag[‘id’]

del tag[‘another-attribute’]

# bold

# KeyError: ‘id’

(‘id’)

# None

Multi-valued attributes¶

HTML 4 defines a few attributes that can have multiple values. HTML 5

removes a couple of them, but defines a few more. The most common

multi-valued attribute is class (that is, a tag can have more than

one CSS class). Others include rel, rev, accept-charset,

headers, and accesskey. Beautiful Soup presents the value(s)

of a multi-valued attribute as a list:

css_soup = BeautifulSoup(‘

‘, ”)

css_soup. p[‘class’]

# [‘body’]

css_soup = BeautifulSoup(‘

‘, ”)

# [‘body’, ‘strikeout’]

If an attribute looks like it has more than one value, but it’s not

a multi-valued attribute as defined by any version of the HTML

standard, Beautiful Soup will leave the attribute alone:

id_soup = BeautifulSoup(‘

‘, ”)

id_soup. p[‘id’]

# ‘my id’

When you turn a tag back into a string, multiple attribute values are

consolidated:

rel_soup = BeautifulSoup(‘

Back to the homepage

‘, ”)

rel_soup. a[‘rel’]

# [‘index’]

rel_soup. a[‘rel’] = [‘index’, ‘contents’]

print(rel_soup. p)

#

Back to the homepage

You can disable this by passing multi_valued_attributes=None as a

keyword argument into the BeautifulSoup constructor:

no_list_soup = BeautifulSoup(‘

‘, ”, multi_valued_attributes=None)

no_list_soup. p[‘class’]

# ‘body strikeout’

You can use get_attribute_list to get a value that’s always a

list, whether or not it’s a multi-valued atribute:

t_attribute_list(‘id’)

# [“my id”]

If you parse a document as XML, there are no multi-valued attributes:

xml_soup = BeautifulSoup(‘

‘, ‘xml’)

xml_soup. p[‘class’]

Again, you can configure this using the multi_valued_attributes argument:

class_is_multi= { ‘*’: ‘class’}

xml_soup = BeautifulSoup(‘

‘, ‘xml’, multi_valued_attributes=class_is_multi) “, “xml”)

You probably won’t need to do this, but if you do, use the defaults as

a guide. They implement the rules described in the HTML specification:

from er import builder_registry

(‘html’). DEFAULT_CDATA_LIST_ATTRIBUTES

NavigableString¶

A string corresponds to a bit of text within a tag. Beautiful Soup

uses the NavigableString class to contain these bits of text:

# ‘Extremely bold’

type()

#

A NavigableString is just like a Python Unicode string, except

that it also supports some of the features described in Navigating

the tree and Searching the tree. You can convert a

NavigableString to a Unicode string with unicode() (in

Python 2) or str (in Python 3):

unicode_string = str()

unicode_string

type(unicode_string)

#

You can’t edit a string in place, but you can replace one string with

another, using replace_with():

(“No longer bold”)

# No longer bold

NavigableString supports most of the features described in

Navigating the tree and Searching the tree, but not all of

them. In particular, since a string can’t contain anything (the way a

tag may contain a string or another tag), strings don’t support the. contents or attributes, or the find() method.

If you want to use a NavigableString outside of Beautiful Soup,

you should call unicode() on it to turn it into a normal Python

Unicode string. If you don’t, your string will carry around a

reference to the entire Beautiful Soup parse tree, even when you’re

done using Beautiful Soup. This is a big waste of memory.

BeautifulSoup¶

The BeautifulSoup object represents the parsed document as a

whole. For most purposes, you can treat it as a Tag

object. This means it supports most of the methods described in

Navigating the tree and Searching the tree.

You can also pass a BeautifulSoup object into one of the methods

defined in Modifying the tree, just as you would a Tag. This

lets you do things like combine two parsed documents:

doc = BeautifulSoup(“

(text=”INSERT FOOTER HERE”). replace_with(footer)

# ‘INSERT FOOTER HERE’

print(doc)

#

#

Since the BeautifulSoup object doesn’t correspond to an actual

HTML or XML tag, it has no name and no attributes. But sometimes it’s

useful to look at its, so it’s been given the special

“[document]”:

Here’s the “Three sisters” HTML document again:

html_doc = “””

I’ll use this as an example to show you how to move from one part of

a document to another.

Going down¶

Tags may contain strings and other tags. These elements are the tag’s

children. Beautiful Soup provides a lot of different attributes for

navigating and iterating over a tag’s children.

Note that Beautiful Soup strings don’t support any of these

attributes, because a string can’t have children.

Navigating using tag names¶

The simplest way to navigate the parse tree is to say the name of the

tag you want. If you want the tag, just say

#

You can do use this trick again and again to zoom in on a certain part

of the parse tree. This code gets the first tag beneath the tag:

# The Dormouse’s story

Using a tag name as an attribute will give you only the first tag by that

name:

If you need to get all the tags, or anything more complicated

than the first tag with a certain name, you’ll need to use one of the

methods described in Searching the tree, such as find_all():

# Tillie]. contents and. children¶

A tag’s children are available in a list called. contents:

head_tag =

head_tag

ntents

# [

title_tag = ntents[0]

title_tag

# [‘The Dormouse’s story’]

The BeautifulSoup object itself has children. In this case, the

tag is the child of the BeautifulSoup object. :

len(ntents)

# 1

ntents[0]

# ‘html’

A string does not have. contents, because it can’t contain

anything:

text = ntents[0]

# AttributeError: ‘NavigableString’ object has no attribute ‘contents’

Instead of getting them as a list, you can iterate over a tag’s

children using the. children generator:

for child in ildren:

print(child)

# The Dormouse’s story. descendants¶

The. children attributes only consider a tag’s

direct children. For instance, the tag has a single direct

child–the

An HTML parser takes this string of characters and turns it into a

series of events: “open an tag”, “open a tag”, “open a