Browser Based Web Scraper

Web Scraper – The #1 web scraping extension

More than 400,000 users are proud of using our solutions!

Point and click interface

Our goal is to make web data extraction as simple as possible.

Configure a scraper by simply pointing and clicking on elements.

No coding required.

Extract data from dynamic web sites

Web Scraper can extract data from sites with multiple levels of navigation. It can navigate a website on all levels:

Categories and subcategories

Pagination

Product pages
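As a rough illustration of multi-level navigation, the sketch below walks a hypothetical in-memory site from category pages through pagination down to product pages. The `SITE` dictionary, its URLs and the `crawl` function are assumptions made for this example; they are not part of Web Scraper, which is configured by pointing and clicking rather than by code.

```python
from collections import deque

# Hypothetical in-memory "site": each URL maps to a page type, the links it
# exposes, and (for product pages) the data to collect. A real scraper would
# fetch and parse pages instead of reading this dictionary.
SITE = {
    "/": {"type": "category", "links": ["/shoes", "/hats"]},
    "/shoes": {"type": "category", "links": ["/shoes?page=2", "/p/1"]},
    "/shoes?page=2": {"type": "category", "links": ["/p/2"]},
    "/hats": {"type": "category", "links": ["/p/3", "/p/1"]},
    "/p/1": {"type": "product", "links": [], "data": {"name": "Sneaker"}},
    "/p/2": {"type": "product", "links": [], "data": {"name": "Boot"}},
    "/p/3": {"type": "product", "links": [], "data": {"name": "Cap"}},
}


def crawl(site, start="/"):
    """Breadth-first walk over categories and pagination, collecting products."""
    seen = {start}
    queue = deque([start])
    products = []
    while queue:
        url = queue.popleft()
        page = site[url]
        if page["type"] == "product":
            products.append({"url": url, **page["data"]})
        for link in page["links"]:
            # Skip unknown links and pages already queued or visited.
            if link in site and link not in seen:
                seen.add(link)
                queue.append(link)
    return products


if __name__ == "__main__":
    for product in crawl(SITE):
        print(product)
```

The `seen` set is what keeps a product that is linked from two categories from being collected twice.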

Built for the modern web

Websites today are built on top of JavaScript frameworks that make the user interface easier to use but are less accessible to scrapers. Web Scraper solves this with:

Full JavaScript execution

Waiting for Ajax requests

Pagination handlers

Page scroll down

Modular selector system

Web Scraper allows you to build Site Maps from different types of selectors.

This system makes it possible to tailor data extraction to different site structures.

Export data in CSV, XLSX and JSON formats

Build scrapers, scrape sites and export data in CSV format directly from your browser.

Use Web Scraper Cloud to export data in CSV, XLSX and JSON formats, access it via API or webhooks, or get it exported via Dropbox.

Diego Kremer

Simply AMAZING. Was thinking about coding myself a simple scraper for a project and then found this super easy to use and very powerful scraper. Worked perfectly with all the websites I tried on. Saves a lot of time. Thanks for that!

Carlos Figueroa

Powerful tool that beats the others out there. Has a learning curve to it but once you conquer that the sky’s the limit. Definitely a tool worth making a donation on and supporting for continued development. Way to go for the authoring crew behind this tool.

Jonathan H

This is fantastic! I’m saving hours, possibly days. I was trying to scrape an old site, badly made, no proper divs or markup.

Using the WebScraper magic, it somehow “knew” the pattern after I selected 2 elements. Amazing.

Yes, it’s a learning curve and you HAVE to watch the video and read the docs. Don’t rate it down just because you can’t be bothered to learn it. If you put the effort in, this will save your butt one day!

8 Best Web Scraping Tools – Learn – Hevo Data

Web Scraping is simply the process of gathering information from the Internet. With Web Scraping Tools, one can download structured data from the web in an automated fashion and use it for analysis.

This article aims at providing you with in-depth knowledge about what Web Scraping is and why it’s essential, along with a comprehensive list of the 8 Best Web Scraping Tools out there in the market, keeping in mind the features offered by each of these, pricing, target audience, and shortcomings. It will help you make an informed decision regarding the Best Web Scraping Tool catering to your business.

Table of Contents

Understanding Web Scraping

Uses of Web Scraping Tools

Factors to Consider when Choosing Web Scraping Tools

Top 8 Web Scraping Tools

ParseHub

Scrapy

OctoParse

Scraper API

Mozenda

Webhose.io

Content Grabber

Common Crawl

Conclusion

Understanding Web Scraping

Web Scraping refers to the extraction of content and data from a website. This information is then exported in a format that is more useful to the user.

Web Scraping can be done manually, but this is extremely tedious work. To speed up the process, you can use Web Scraping Tools, which are automated, cost less, and work faster.

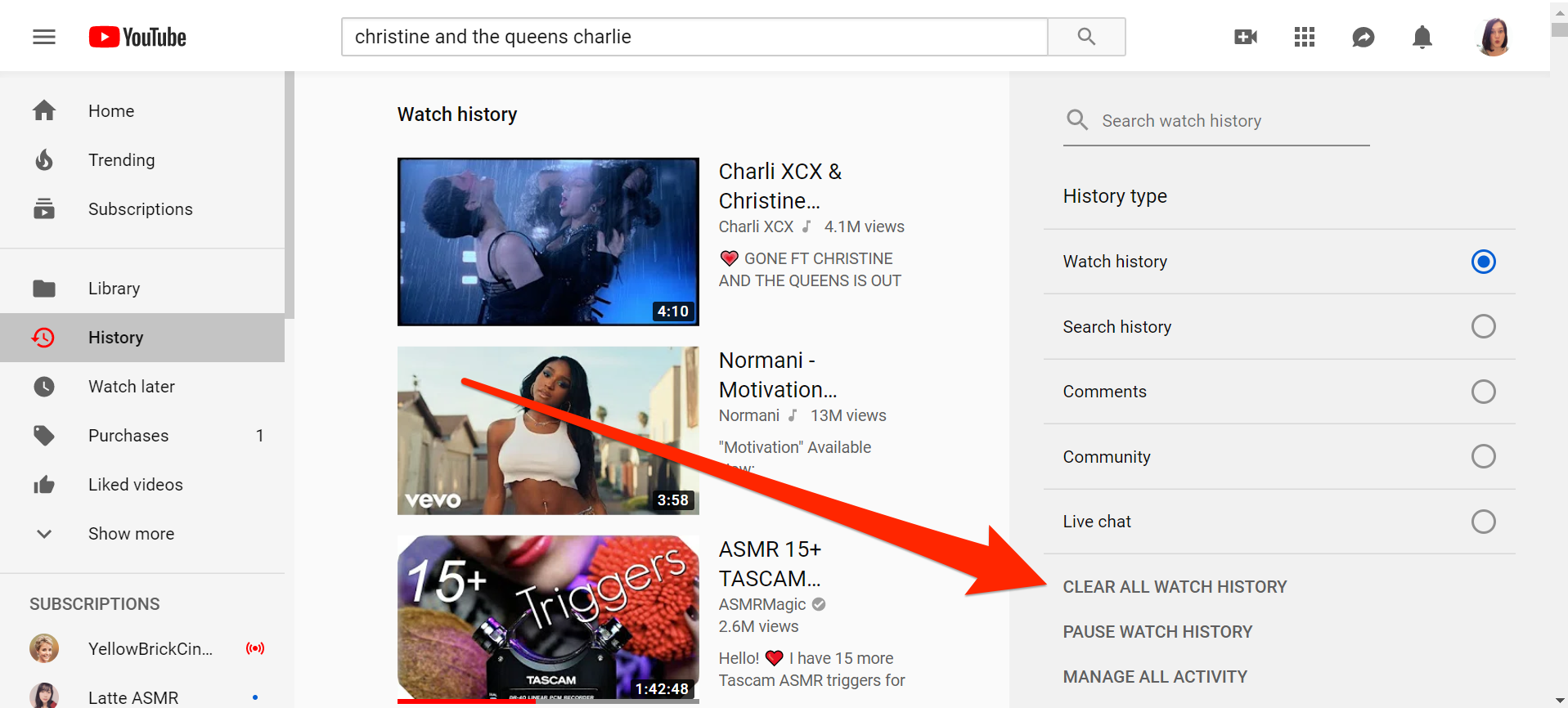

How does a Web Scraper work exactly?

First, the Web Scraper is given the URLs to load before the scraping process starts. The scraper then loads the complete HTML code for the desired page. Next, it extracts either all the data on the page or only the specific data selected by the user before the run. Finally, the Web Scraper outputs all the data that has been collected in a usable format.
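The steps above can be sketched with Python's standard library alone. This is a minimal illustration, not how any particular tool works: the `HTML` string stands in for a page that a real scraper would download, and the `ItemLinkParser` class and the `item` CSS class are hypothetical names chosen for the example.

```python
import json
from html.parser import HTMLParser

# Stand-in for the HTML a real scraper would load from a URL.
HTML = """
<html><body>
  <h1>Catalog</h1>
  <ul>
    <li class="item"><a href="/p/1">Sneaker</a></li>
    <li class="item"><a href="/p/2">Boot</a></li>
  </ul>
  <a href="/about">About</a>
</body></html>
"""


class ItemLinkParser(HTMLParser):
    """Collects the href and text of <a> tags inside <li class="item">."""

    def __init__(self):
        super().__init__()
        self.in_item = False
        self.current_href = None
        self.rows = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "li" and attrs.get("class") == "item":
            self.in_item = True
        elif tag == "a" and self.in_item:
            self.current_href = attrs.get("href")

    def handle_data(self, data):
        # Only text inside a selected link becomes a row.
        if self.current_href is not None and data.strip():
            self.rows.append({"url": self.current_href, "name": data.strip()})

    def handle_endtag(self, tag):
        if tag == "a":
            self.current_href = None
        elif tag == "li":
            self.in_item = False


def scrape(html):
    """Extract the selected data from the HTML and return it as records."""
    parser = ItemLinkParser()
    parser.feed(html)
    return parser.rows


if __name__ == "__main__":
    # Output step: emit the collected records in a usable format (JSON here).
    print(json.dumps(scrape(HTML), indent=2))
```

The "About" link is ignored because it sits outside the selected list items, which mirrors extracting only the data the user selected rather than everything on the page.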

Uses of Web Scraping Tools

Web Scraping Tools are used for a large number of purposes like:

Data collection for market research

Contact information extraction

Tracking from multiple sources

Monitoring

Factors to Consider when Choosing Web Scraping Tools

Most of the data present on the Internet is unstructured. Therefore we need to have systems in place to extract meaningful insights from it. As someone looking to play around with data and extract some meaningful insights from it, one of the most fundamental tasks that you are required to carry out is Web Scraping. But Web Scraping can be a resource-intensive endeavor that requires you to begin with all the necessary Web Scraping Tools at your disposal. There are a couple of factors that you need to keep in mind before you decide on the right Web Scraping Tools.

Scalability: The tool you use should be scalable because your data scraping needs will only increase with time. So you need to pick a Web Scraping Tool that doesn’t slow down as data demand increases.

Transparent Pricing Structure: The pricing structure of the chosen tool should be fairly transparent. This means that hidden costs shouldn’t crop up at a later stage; instead, every detail must be made explicit in the pricing structure. Choose a provider that has a clear model and doesn’t beat around the bush when talking about the features being offered.

Data Delivery: The choice of a Web Scraping Tool will also depend on the format in which the data must be delivered. For instance, if your data needs to be delivered in JSON format, then your search should be narrowed down to the crawlers that deliver in JSON format. To be on the safe side, pick a provider whose crawler can deliver data in a wide array of formats, since there are occasions where you may have to deliver data in formats that you aren’t used to. Versatility ensures that you don’t fall short when it comes to data delivery. Ideally, the tool should deliver data as XML, JSON, or CSV, or deliver it to FTP, Google Cloud Storage, Dropbox, etc.

Handling Anti-Scraping Mechanisms: Some websites have anti-scraping measures in place. If you are afraid you’ve hit a wall with this, these measures can often be bypassed through simple modifications to the crawler. Pick a web crawler that has a robust mechanism of its own for overcoming these roadblocks.

Customer Support: You might run into an issue while running your Web Scraping Tool and might need assistance to solve it. Customer support, therefore, becomes an important factor when deciding on a good tool, and it must be a priority for the Web Scraping provider. With great customer support, you don’t need to worry if anything goes wrong, and you can bid farewell to the frustration of waiting for satisfactory answers. Test the customer support by reaching out before making a purchase, and note how long it takes them to respond.

Quality Of Data: As discussed before, most of the data present on the Internet is unstructured and needs to be cleaned and organized before it can be put to actual use. Look for a Web Scraping provider that gives you the tools required to clean and organize the scraped data. Since the quality of data will affect any later analysis, it is imperative to keep this factor in mind.
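To make the data delivery point concrete, here is a minimal sketch of one set of scraped records being delivered in more than one format. The `export` function, the `EXPORTERS` table and the sample records are hypothetical and only illustrate the idea; real tools expose their own export options.

```python
import csv
import io
import json


def to_json(records):
    """Serialize records as a JSON array."""
    return json.dumps(records, indent=2)


def to_csv(records):
    """Serialize records as CSV, using the first record's keys as the header."""
    if not records:
        return ""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=list(records[0].keys()), lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


# Supporting a new delivery format means adding one entry here.
EXPORTERS = {"json": to_json, "csv": to_csv}


def export(records, fmt):
    """Deliver the same records in the requested format."""
    if fmt not in EXPORTERS:
        raise ValueError(f"unsupported format: {fmt}")
    return EXPORTERS[fmt](records)


if __name__ == "__main__":
    records = [{"name": "Sneaker", "price": 50}, {"name": "Boot", "price": 80}]
    print(export(records, "csv"))
    print(export(records, "json"))
```

Keeping the records format-neutral until the last step is what makes it cheap to add a format you were not planning for.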

Hevo offers a faster way to move data from databases, SaaS applications and 100+ other data sources into your data warehouse to be visualized in a BI tool. Hevo is fully automated and hence does not require you to code.

Check out some of the cool features of Hevo:

Completely Automated: The Hevo platform can be set up in just a few minutes and requires minimal maintenance.

Real-Time Data Transfer: Hevo provides real-time data migration, so you can always have analysis-ready data.

100% Complete & Accurate Data Transfer: Hevo’s robust infrastructure ensures reliable data transfer with zero data loss.

Scalable Infrastructure: Hevo has in-built integrations for 100+ sources that can help you scale your data infrastructure as required.

24/7 Live Support: The Hevo team is available round the clock to extend exceptional support to you through chat, email, and support calls.

Schema Management: Hevo takes away the tedious task of schema management; it automatically detects the schema of incoming data and maps it to the destination schema.

Live Monitoring: Hevo allows you to monitor the data flow so you can check where your data is at a particular point in time.


Top 8 Web Scraping Tools

Choosing the ideal Web Scraping Tool that perfectly meets your business requirements can be a challenging task, especially when there’s a large variety of Web Scraping Tools available in the market. To simplify your search, here is a comprehensive list of 8 Best Web Scraping Tools that you can choose from:

ParseHub

Scrapy

OctoParse

Scraper API

Mozenda

Webhose.io

Content Grabber

Common Crawl

1. ParseHub


Target Audience

ParseHub is an incredibly powerful and elegant tool that allows you to build web scrapers without having to write a single line of code. Using it is as simple as selecting the data you need. ParseHub is targeted at pretty much anyone who wishes to play around with data, from analysts and data scientists to journalists.

Key Features of ParseHub

Clean text and HTML before downloading data.

Easy-to-use graphical interface.

ParseHub allows you to collect and store data on servers.

Automatic IP rotation.

Scraping behind login walls.

Provides desktop clients for Windows, Mac OS, and Linux.

Data is exported in JSON or Excel format.

Can extract data from tables and maps.

ParseHub Pricing

ParseHub’s pricing structure looks like this:

Everyone: This plan is available to users free of cost. It allows 200 pages per run in 40 minutes and supports up to 5 public projects, with very limited support and data retention for 14 days.

Standard ($149/month): You can get 200 pages in about 10 minutes with this plan, which allows you to scrape 10,000 pages per run. With the Standard Plan, you can run 20 private projects backed by standard support, with data retention of 14 days. Along with these features you also get IP rotation, scheduling, and the ability to store images and files in Dropbox or Amazon S3.

Professional ($499/month): Scraping speed is faster than in the Standard Plan (scrape up to 200 pages in 2 minutes), with unlimited pages per run. You can run 120 private projects with priority support and data retention for 30 days, plus the features offered in the Standard Plan.

Enterprise (Open To Discussion): You can get in touch with the ParseHub team to lay down a customized plan based on your business needs, offering unlimited pages per run and dedicated scraping speeds across all the projects you choose to undertake, on top of the features offered in the Professional Plan.

Shortcomings

Troubleshooting is not easy for larger projects.

The output can be very limiting at times (not being able to publish complete scraped output).

2. Scrapy

Scrapy is a Web Scraping library used by Python developers to build scalable web crawlers. It is a complete web crawling framework that handles the functionality that makes building web crawlers difficult, such as proxy middleware and queuing requests, among many others.

Key Features of Scrapy

Open-source tool.

Extremely well documented.

Easily extensible.

Portable Python.

Deployment is simple and reliable.

Middleware modules are available for the integration of useful tools.

Scrapy Pricing

It is an open-source tool that is free of cost and managed by Scrapinghub and other contributors.

Shortcomings

In terms of JavaScript support, it is time-consuming to inspect the site and develop the crawler to simulate AJAX/PJAX requests.

3. OctoParse

OctoParse has a target audience similar to ParseHub, catering to people who want to scrape data without having to write a single line of code, while still having control over the full process with their highly intuitive user interface.

Key Features of OctoParse

Site Parser and hosted solution for users who want to run scrapers in the cloud.

Point-and-click screen scraper that allows you to scrape behind login forms, fill in forms, render JavaScript, scroll through infinite scroll, and much more.

Anonymous Web Data Scraping to avoid being banned.

OctoParse Pricing

Free: This plan offers unlimited pages per crawl, unlimited computers, 10,000 records per export, and 2 concurrent local runs, allowing you to build up to 10 crawlers for free with community support.

Standard ($75/month): This plan offers unlimited data export, 100 crawlers, scheduled extractions, average-speed extraction, auto IP rotation, task templates, API access, and email support. This plan is mainly designed for small teams.

Professional ($209/month): This plan offers 250 crawlers, scheduled extractions, 20 concurrent cloud extractions, high-speed extraction, auto IP rotation, task templates, and an advanced API.

Enterprise (Open to Discussion): All the Professional features plus scalable concurrent processors, multi-role access, and tailored onboarding are among the features offered in the Enterprise Plan, which is completely customized for your business needs.

OctoParse also offers Crawler Service and Data Service starting at $189 and $399 respectively.

Shortcomings

If you run the crawler with local extraction instead of running it from the cloud, it halts automatically after 4 hours, which makes recovering, saving and starting over with the next set of data very cumbersome.

4. Scraper API

Scraper API is designed for developers building web scrapers. It handles browsers, proxies, and CAPTCHAs, which means that raw HTML from any website can be obtained through a simple API call.

Key Features of Scraper API

Helps you render JavaScript.

Easy to integrate.

Geolocated rotating proxies.

Speed and reliability to build scalable web scrapers.

Special pools of proxies for e-commerce price scraping, search engine scraping, social media scraping, etc.

Scraper API Pricing

Scraper API offers 1,000 free API calls to start. After that, it offers several pricing plans to pick from.

Hobby ($29/month): This plan offers 10 concurrent requests, 250,000 API calls, no geotargeting, no JS rendering, standard proxies, and email support.

Startup ($99/month): The Startup Plan offers 25 concurrent requests, 1,000,000 API calls, US geotargeting, no JS rendering, standard proxies, and email support.

Business ($249/month): The Business Plan offers 50 concurrent requests, 3,000,000 API calls, all geotargeting, JS rendering, residential proxies, and priority email support.

Enterprise Custom (Open to Discussion): The Enterprise Custom Plan offers an assortment of features tailored to your business needs, with all the features offered in the other plans.

Shortcomings

As a Web Scraping Tool, Scraper API is not deemed suitable for browsing.

5. Mozenda

Mozenda caters to enterprises looking for a cloud-based self serve Web Scraping platform. Having scraped over 7 billion pages, Mozenda boasts enterprise customers all over the world.

Key Features of Mozenda

Offers a point-and-click interface to create Web Scraping events in no time.

Request blocking features and a job sequencer to harvest web data in real time.

Customer support and best-in-class account management.

Collection and publishing of data to preferred BI tools or databases.

Provides both phone and email support to all customers.

Scalable on-premise hosting.

Mozenda Pricing

Mozenda’s pricing uses something called Processing Credits, which distinguishes it from other Web Scraping Tools. Processing Credits measure how much of Mozenda’s computing resources are used in various customer activities such as page navigation, premium harvesting, and image or file downloads.

Project: This is aimed at small projects with fairly low capacity requirements. It is designed for 1 user, can build 10 web crawlers, and accumulates up to 20k processing credits/month.

Professional: This is offered as an entry-level business package that includes faster execution, professional support, and access to pipes and Mozenda’s apps (35k processing credits/month).

Corporate: This plan is tailored for medium to large-scale data intelligence projects handling large datasets and higher capacity requirements (1 million processing credits/month).

Managed Services: This plan provides enterprise-level data extraction, monitoring, and processing. It stands out from the crowd with its dedicated capacity and prioritized robot support.

On-Premise: This is a secure self-hosted solution and is considered ideal for hedge funds, banks, or government and healthcare organizations that need to set up high privacy measures, comply with government and HIPAA regulations, and protect their intranets containing private information.

Shortcomings

Mozenda is a little pricey compared to the other Web Scraping Tools discussed so far, with its lowest plan starting from $250/month.

6. Webhose.io

Webhose.io is best recommended for platforms or services that are on the lookout for a completely developed web scraper and data supplier for content marketing, sharing, etc. The cost of the platform is quite affordable for growing companies.

Key Features of Webhose.io

Content indexing is fairly fast.

A dedicated support team.

Integration with different solutions.

Easy-to-use APIs providing full control over language and source selection.

Simple and intuitive interface design allowing you to perform all tasks in a simpler and more practical way.

Structured, machine-readable data sets in JSON and XML formats.

Access to historical feeds dating as far back as 10 years.

Provides access to a massive repository of data feeds without having to bother about paying extra.

An advanced feature allows you to conduct granular analysis on the datasets you want to feed.

Webhose.io Pricing

The free version provides 1000 HTTP requests per month. Paid plans offer more features like more calls, power over the extracted data, and more benefits like image analytics, Geo-location, dark web monitoring, and up to 10 years of archived historical data.

The different plans are:

Open Web Data Feeds: This plan incorporates enterprise-level coverage, real-time monitoring, and engagement metrics like Social Signals and Virality Score, along with clean JSON/XML formats.

Cyber Data Feed: The Cyber Data Feed plan provides the user with real-time monitoring, entity and threat recognition, image analytics and geo-location, along with access to TOR, ZeroNet, I2P, Telegram, etc.

Archived Web Data: This plan provides you with an archive of data dating back 10 years, sentiment and entity recognition, and engagement metrics. This is a prepaid credit account pricing model.

Shortcomings

The option for data retention of historical data was not available for a few users.

Users were unable to change the plan within the web interface on their own, which required intervention from the sales team.

Setup isn’t that simple for non-developers.

7. Content Grabber

Content Grabber is a cloud-based Web Scraping Tool that helps businesses of all sizes with data extraction.

Key Features of Content Grabber

Web data extraction is faster compared to a lot of its competitors.

Allows you to build web apps with the dedicated API, allowing you to execute web data directly from your website.

You can schedule it to scrape information from the web.

Offers a wide variety of formats for the extracted data, like CSV, JSON, etc.

Content Grabber Pricing

Two pricing models are available for users of Content Grabber:

Buying a license

Monthly subscription

For each, you have three subcategories:

Server ($69/month, $449/year): This model comes equipped with a Limited Content Grabber Agent Editor allowing you to edit, run and debug agents. It also provides scripting support, a command line, and an API.

Professional ($149/month, $995/year): This model comes equipped with a Full-Featured Content Grabber Agent Editor allowing you to edit, run and debug agents. It also provides scripting support and a command line, along with self-contained agents. However, this model does not provide an API.

Premium ($299/month, $2495/year): This model comes equipped with a Full-Featured Content Grabber Agent Editor allowing you to edit, run and debug agents. It also provides scripting support and a command line, along with self-contained agents, and provides an API as well.

Shortcomings

Prior knowledge of HTML and HTTP is required.

Crawlers for previously scraped websites are not available.

8. Common Crawl

Common Crawl was developed for anyone wishing to explore and analyze data and uncover meaningful insights from it.

Key Features of Common Crawl

Open datasets of raw web page data and text extractions.

Support for non-code based use cases.

Provides resources for educators teaching data analysis.

Common Crawl Pricing

Common Crawl allows any interested person to use this tool without having to worry about fees or any other complications. It is a registered non-profit platform that relies on donations to keep its operations smoothly running.

Shortcomings

Support for live data isn’t available.

Support for AJAX-based sites isn’t available.

The data available in Common Crawl isn’t structured and can’t be filtered.

Conclusion

This blog first gave an idea about Web Scraping in general. It then listed the essential factors to keep in mind when making an informed decision about making a Web Scraping Tool purchase followed by a sneak peek at 8 of the best Web Scraping Tools in the market considering a string of factors. The main takeaway from this blog, therefore, is that in the end, a user should pick the Web Scraping Tools that suit their needs. Extracting complex data from a diverse set of data sources can be a challenging task and this is where Hevo saves the day!

Hevo, a No-code Data Pipeline, helps you transfer data from a source of your choice in a fully automated and secure manner without having to write code repeatedly. With its secure integrations with 100+ sources and BI tools, Hevo allows you to export, load, transform, and enrich your data and make it analysis-ready in a jiffy.

Want to take Hevo for a spin? Sign Up for a 14-day free trial and experience the feature-rich Hevo suite first hand. You can also have a look at the unbeatable pricing that will help you choose the right plan for your business needs.


About Price & Web Scraping Tools | Imperva

What is web scraping

Web scraping is the process of using bots to extract content and data from a website.

Unlike screen scraping, which only copies pixels displayed onscreen, web scraping extracts underlying HTML code and, with it, data stored in a database. The scraper can then replicate entire website content elsewhere.

Web scraping is used in a variety of digital businesses that rely on data harvesting. Legitimate use cases include:

Search engine bots crawling a site, analyzing its content and then ranking it.

Price comparison sites deploying bots to auto-fetch prices and product descriptions for allied seller websites.

Market research companies using scrapers to pull data from forums and social media (e.g., for sentiment analysis).

Web scraping is also used for illegal purposes, including the undercutting of prices and the theft of copyrighted content. An online entity targeted by a scraper can suffer severe financial losses, especially if it’s a business strongly relying on competitive pricing models or deals in content distribution.

Scraper tools and bots

Web scraping tools are software (i.e., bots) programmed to sift through databases and extract information. A variety of bot types are used, many being fully customizable to:

Recognize unique HTML site structures

Extract and transform content

Store scraped data

Extract data from APIs

Since all scraping bots have the same purpose—to access site data—it can be difficult to distinguish between legitimate and malicious bots.

That said, several key differences help distinguish between the two.

Legitimate bots are identified with the organization for which they scrape. For example, Googlebot identifies itself in its HTTP header as belonging to Google. Malicious bots, conversely, impersonate legitimate traffic by creating a false HTTP user agent.

Legitimate bots abide by a site’s robots.txt file, which lists the pages a bot is permitted to access and those it is not. Malicious scrapers, on the other hand, crawl the website regardless of what the site operator has allowed.

Resources needed to run web scraper bots are substantial—so much so that legitimate scraping bot operators heavily invest in servers to process the vast amount of data being extracted.

A perpetrator, lacking such a budget, often resorts to using a botnet—geographically dispersed computers, infected with the same malware and controlled from a central location. Individual botnet computer owners are unaware of their participation. The combined power of the infected systems enables large scale scraping of many different websites by the perpetrator.
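The robots.txt check that a well-behaved bot performs before fetching a page can be sketched with Python's standard `urllib.robotparser` module. The rules in `ROBOTS_TXT`, the `ExampleBot` user agent and the example.com URLs are made up for illustration; a real bot would download the file from the target site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real bot would fetch it from the site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Allow: /
"""


def build_parser(robots_txt):
    """Parse robots.txt text into a reusable rule set."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def allowed(parser, user_agent, url):
    """Return True if the rules permit this user agent to fetch the URL."""
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    rules = build_parser(ROBOTS_TXT)
    for url in (
        "https://example.com/products",
        "https://example.com/private/report",
    ):
        print(url, allowed(rules, "ExampleBot", url))
```

Nothing enforces these rules on the client side, which is why honoring them separates legitimate bots from malicious ones rather than stopping the latter.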

Malicious web scraping examples

Web scraping is considered malicious when data is extracted without the permission of website owners. The two most common use cases are price scraping and content theft.

Price scraping

In price scraping, a perpetrator typically uses a botnet from which to launch scraper bots to inspect competing business databases. The goal is to access pricing information, undercut rivals and boost sales.

Attacks frequently occur in industries where products are easily comparable and price plays a major role in purchasing decisions. Victims of price scraping can include travel agencies, ticket sellers and online electronics vendors.

For example, smartphone e-traders, who sell similar products for relatively consistent prices, are frequent targets. To remain competitive, they’re motivated to offer the best prices possible, since customers usually go for the lowest cost offering. To gain an edge, a vendor can use a bot to continuously scrape his competitors’ websites and instantly update his own prices accordingly.

For perpetrators, a successful price scraping can result in their offers being prominently featured on comparison websites—used by customers for both research and purchasing. Meanwhile, scraped sites often experience customer and revenue losses.

Content scraping

Content scraping comprises large-scale content theft from a given site. Typical targets include online product catalogs and websites relying on digital content to drive business. For these enterprises, a content scraping attack can be devastating.

For example, online local business directories invest significant amounts of time, money and energy constructing their database content. Scraping can result in it all being released into the wild, used in spamming campaigns or resold to competitors. Any of these events are likely to impact a business’ bottom line and its daily operations.

The following is excerpted from a complaint, filed by Craigslist, detailing its experience with content scraping. It reinforces how damaging the practice can be:

“[The content scraping service] would, on a daily basis, send an army of digital robots to craigslist to copy and download the full text of millions of craigslist user ads. [The service] then indiscriminately made those misappropriated listings available—through its so-called ‘data feed’—to any company that wanted to use them, for any purpose. Some such ‘customers’ paid as much as $20,000 per month for that content…”

According to the claim, scraped data was used for spam and email fraud, among other activities:

“[The defendants] then harvest craigslist users’ contact information from that database, and initiate many thousands of electronic mail messages per day to the addresses harvested from craigslist servers…. [The messages] contain misleading subject lines and content in the body of the spam messages, designed to trick craigslist users into switching from using craigslist’s services to using [the defendants’] service…”

Web scraping protection

The increased sophistication in malicious scraper bots has rendered some common security measures ineffective. For example, headless browser bots can masquerade as humans as they fly under the radar of most mitigation solutions.

To counter advances made by malicious bot operators, Imperva uses granular traffic analysis. It ensures that all traffic coming to your site, human and bot alike, is completely legitimate.

The process involves the cross verification of factors, including:

HTML fingerprint – The filtering process starts with a granular inspection of HTTP headers. These can provide clues as to whether a visitor is a human or bot, and malicious or safe. Header signatures are compared against a constantly updated database of over 10 million known variants.

IP reputation – We collect IP data from all attacks against our clients. Visits from IP addresses having a history of being used in assaults are treated with suspicion and are more likely to be scrutinized further.

Behavior analysis – Tracking the ways visitors interact with a website can reveal abnormal behavioral patterns, such as a suspiciously aggressive rate of requests and illogical browsing patterns. This helps identify bots that pose as human visitors.

Progressive challenges – We use a set of challenges, including cookie support and JavaScript execution, to filter out bots and minimize false positives. As a last resort, a CAPTCHA challenge can weed out bots attempting to pass themselves off as humans.
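One ingredient of the behavior analysis described above, spotting a suspiciously aggressive rate of requests, can be sketched as a sliding-window counter. This is a simplified illustration and not Imperva's implementation; the `RateMonitor` class, its thresholds and the client identifiers are assumptions made for the example.

```python
from collections import defaultdict, deque


class RateMonitor:
    """Flags clients that exceed max_requests within window_seconds."""

    def __init__(self, max_requests, window_seconds):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits = defaultdict(deque)  # client id -> recent timestamps

    def record(self, client_id, timestamp):
        """Record one request; return True if the client now looks suspicious."""
        hits = self._hits[client_id]
        hits.append(timestamp)
        # Drop requests that have fallen out of the sliding window.
        while hits and hits[0] <= timestamp - self.window_seconds:
            hits.popleft()
        return len(hits) > self.max_requests


if __name__ == "__main__":
    monitor = RateMonitor(max_requests=3, window_seconds=10)
    for second in (0, 1, 2, 3, 20):
        print(second, monitor.record("203.0.113.7", second))
```

A rate check like this would only be one signal among several; on its own it would miss slow scrapers and could flag busy but legitimate clients.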

Learn more about protecting your site from malicious bot traffic with Imperva’s bot management solution.

Frequently Asked Questions about browser based web scraper

What is the best tool for web scraping?

Top 8 Web Scraping Tools: ParseHub, Scrapy, OctoParse, Scraper API, Mozenda, Webhose.io, Content Grabber, Common Crawl. (Feb 6, 2021)

What is browser scraping?

Web scraping is the process of using bots to extract content and data from a website. … The scraper can then replicate entire website content elsewhere. Web scraping is used in a variety of digital businesses that rely on data harvesting.

How can I scrape data from a website for free?

Besides that, the cloud service will allow you to store and retrieve the data at any time. ParseHub, Data Scraper (Chrome), Web scraper, Scraper (Chrome), Outwit hub (Firefox), Dexi.io (formerly known as Cloud scrape), Webhose.io. (Aug 3, 2021)